Once you have deployed a Project Quay registry, there are many ways you can further configure and manage that deployment. Topics covered here include:

- Advanced Project Quay configuration
- Setting notifications to alert you of a new Project Quay release
- Securing connections with SSL/TLS certificates
- Directing action logs storage to Elasticsearch
- Configuring image security scanning with Clair
- Scan pod images with the Container Security Operator
- Integrate Project Quay into OpenShift Container Platform with the Quay Bridge Operator
- Mirroring images with repository mirroring
- Authenticating users with LDAP
- Enabling Quay for Prometheus and Grafana metrics
- Setting up geo-replication
- Troubleshooting Project Quay

For a complete list of Project Quay configuration fields, see the Configure Project Quay page.

Advanced Project Quay configuration

You can configure your Project Quay after initial deployment using one of the following methods:

- Editing the config.yaml file. The config.yaml file contains most configuration information for the Project Quay cluster. Editing the config.yaml file directly is the primary method for advanced tuning and enabling specific features.

- Using the Project Quay API. Some Project Quay features can be configured through the API.

The content in this section describes how to use each of these interfaces and how to configure your deployment with advanced features.

Using the API to modify Project Quay

See the Project Quay API Guide for information on how to access Project Quay API.

Editing the config.yaml file to modify Project Quay

Advanced features can be implemented by editing the config.yaml file directly. All configuration fields for Project Quay features and settings are available in the Project Quay configuration guide.

The following example is one setting that you can change directly in the config.yaml file. Use this example as a reference when editing your config.yaml file for other features and settings.

Adding name and company to Project Quay sign-in

When you set the FEATURE_USER_METADATA field to True, users are prompted for their name and company when they first sign in. This field is optional, but it can provide you with extra data about your Project Quay users.

Use the following procedure to add a name and a company to the Project Quay sign-in page.

- Add, or set, the FEATURE_USER_METADATA configuration field to True in your config.yaml file. For example:

      # ...
      FEATURE_USER_METADATA: true
      # ...

- Redeploy Project Quay.

- Now, when prompted to log in, users are requested to enter their name and company.

Using the configuration API

The configuration tool exposes 4 endpoints that can be used to build, validate, bundle and deploy a configuration. The config-tool API is documented at https://github.com/quay/config-tool/blob/master/pkg/lib/editor/API.md. In this section, you will see how to use the API to retrieve the current configuration and how to validate any changes you make.

Retrieving the default configuration

If you are running the configuration tool for the first time, and do not have an existing configuration, you can retrieve the default configuration. Start the container in config mode:

$ sudo podman run --rm -it --name quay_config \
    -p 8080:8080 \
    quay.io/projectquay/quay:v3.17.1 config secret

Use the config endpoint of the configuration API to get the default:

$ curl -X GET -u quayconfig:secret http://quay-server:8080/api/v1/config | jq

The value returned is the default configuration in JSON format:

{
  "config.yaml": {
    "AUTHENTICATION_TYPE": "Database",
    "AVATAR_KIND": "local",
    "DB_CONNECTION_ARGS": {
      "autorollback": true,
      "threadlocals": true
    },
    "DEFAULT_TAG_EXPIRATION": "2w",
    "EXTERNAL_TLS_TERMINATION": false,
    "FEATURE_ACTION_LOG_ROTATION": false,
    "FEATURE_ANONYMOUS_ACCESS": true,
    "FEATURE_APP_SPECIFIC_TOKENS": true,
    ....
  }
}

Retrieving the current configuration

If you have already configured and deployed the Quay registry, stop the container and restart it in configuration mode, loading the existing configuration as a volume:

$ sudo podman run --rm -it --name quay_config \
    -p 8080:8080 \
    -v $QUAY/config:/conf/stack:Z \
    quay.io/projectquay/quay:v3.17.1 config secret

Use the config endpoint of the API to get the current configuration:

$ curl -X GET -u quayconfig:secret http://quay-server:8080/api/v1/config | jq

The value returned is the current configuration in JSON format, including database and Redis configuration data:

{
  "config.yaml": {
    ....
    "BROWSER_API_CALLS_XHR_ONLY": false,
    "BUILDLOGS_REDIS": {
      "host": "quay-server",
      "password": "strongpassword",
      "port": 6379
    },
    "DATABASE_SECRET_KEY": "4b1c5663-88c6-47ac-b4a8-bb594660f08b",
    "DB_CONNECTION_ARGS": {
      "autorollback": true,
      "threadlocals": true
    },
    "DB_URI": "postgresql://quayuser:quaypass@quay-server:5432/quay",
    "DEFAULT_TAG_EXPIRATION": "2w",
    ....
  }
}

Validating configuration using the API

You can validate a configuration by posting it to the config/validate endpoint:

$ curl -u quayconfig:secret --header 'Content-Type: application/json' --request POST --data '
{
  "config.yaml": {
    ....
    "BROWSER_API_CALLS_XHR_ONLY": false,
    "BUILDLOGS_REDIS": {
      "host": "quay-server",
      "password": "strongpassword",
      "port": 6379
    },
    "DATABASE_SECRET_KEY": "4b1c5663-88c6-47ac-b4a8-bb594660f08b",
    "DB_CONNECTION_ARGS": {
      "autorollback": true,
      "threadlocals": true
    },
    "DB_URI": "postgresql://quayuser:quaypass@quay-server:5432/quay",
    "DEFAULT_TAG_EXPIRATION": "2w",
    ....
  }
}' http://quay-server:8080/api/v1/config/validate | jq

The returned value is an array containing the errors found in the configuration. If the configuration is valid, an empty array [] is returned.
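As a rough illustration, the validation call and the handling of its response could be sketched in Python as follows. The URL and the quayconfig:secret credentials are the example values used in this section. The helper names build_validate_request and group_errors are hypothetical, and the shape of each error entry (FieldGroup, Tags, Message) is assumed from the sample output in "Determining the required fields" rather than from a formal schema. The request is built but not sent, so the sketch runs without a live configuration tool.

```python
import base64
import json
import urllib.request
from collections import defaultdict


def build_validate_request(base_url, user, password, config):
    """Build (but do not send) a POST to the config/validate endpoint."""
    body = json.dumps({"config.yaml": config}).encode("utf-8")
    credentials = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{base_url}/api/v1/config/validate",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {credentials}",
        },
    )


def group_errors(errors):
    """Group validation messages by field group; an empty result means valid."""
    grouped = defaultdict(list)
    for error in errors:
        grouped[error["FieldGroup"]].append(error["Message"])
    return dict(grouped)


request = build_validate_request("http://quay-server:8080", "quayconfig", "secret", {})
# To send the request against a running config tool (not done here):
#   with urllib.request.urlopen(request) as response:
#       errors = json.load(response)

# Sample entries copied from the output shape shown in this guide.
sample_errors = [
    {"FieldGroup": "Database", "Tags": ["DB_URI"], "Message": "DB_URI is required."},
    {"FieldGroup": "HostSettings", "Tags": ["SERVER_HOSTNAME"], "Message": "SERVER_HOSTNAME is required"},
]
print(group_errors(sample_errors))
```

Grouping by FieldGroup is only one way to present the result; the point is that an empty array maps to an empty dictionary, which a script can treat as "configuration valid".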

Determining the required fields

You can determine the required fields by posting an empty configuration structure to the config/validate endpoint:

$ curl -u quayconfig:secret --header 'Content-Type: application/json' --request POST --data '
{
  "config.yaml": {
  }
}' http://quay-server:8080/api/v1/config/validate | jq

The value returned is an array indicating which fields are required:

[
  {
    "FieldGroup": "Database",
    "Tags": [
      "DB_URI"
    ],
    "Message": "DB_URI is required."
  },
  {
    "FieldGroup": "DistributedStorage",
    "Tags": [
      "DISTRIBUTED_STORAGE_CONFIG"
    ],
    "Message": "DISTRIBUTED_STORAGE_CONFIG must contain at least one storage location."
  },
  {
    "FieldGroup": "HostSettings",
    "Tags": [
      "SERVER_HOSTNAME"
    ],
    "Message": "SERVER_HOSTNAME is required"
  },
  {
    "FieldGroup": "HostSettings",
    "Tags": [
      "SERVER_HOSTNAME"
    ],
    "Message": "SERVER_HOSTNAME must be of type Hostname"
  },
  {
    "FieldGroup": "Redis",
    "Tags": [
      "BUILDLOGS_REDIS"
    ],
    "Message": "BUILDLOGS_REDIS is required"
  }
]

Getting Project Quay release notifications

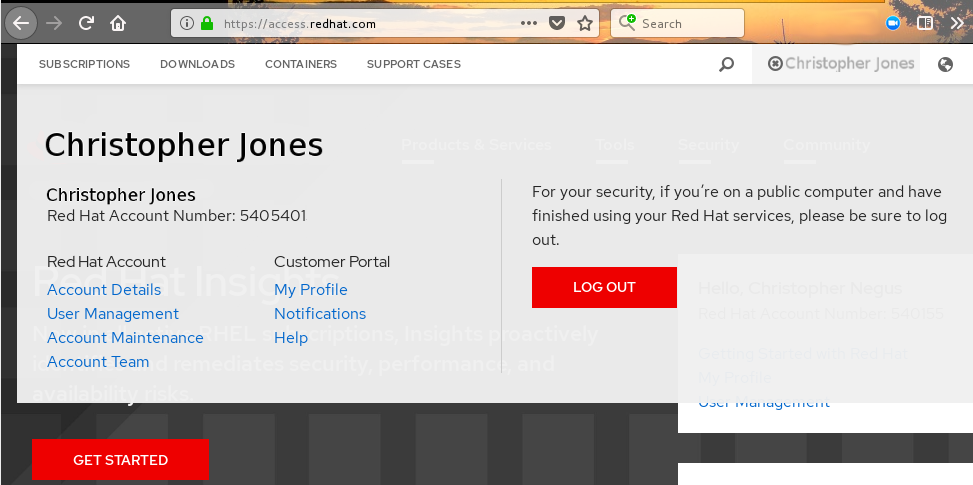

To keep up with the latest Project Quay releases and other changes related to Project Quay, you can sign up for update notifications on the Red Hat Customer Portal. After signing up, you receive notifications when there is a new Project Quay version, updated documentation, or other Project Quay news.

-

Log into the Red Hat Customer Portal with your Red Hat customer account credentials.

-

Select your user name (upper-right corner) to see Red Hat Account and Customer Portal selections:

-

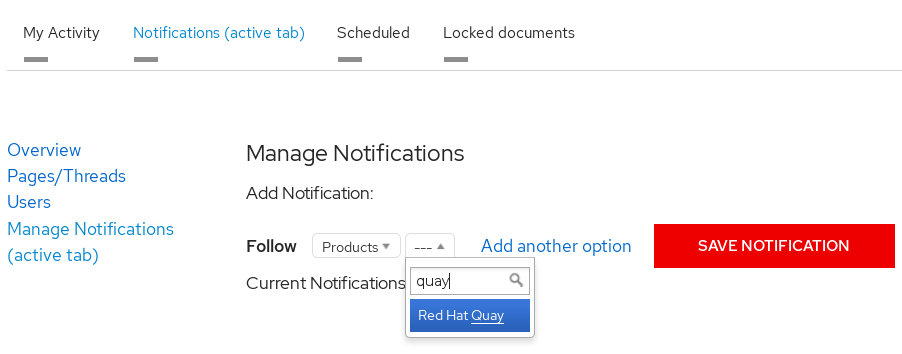

Select Notifications. Your profile activity page appears.

-

Select the Notifications tab.

-

Select Manage Notifications.

-

Select Follow, then choose Products from the drop-down box.

-

From the drop-down box next to the Products, search for and select Project Quay:

-

Select the SAVE NOTIFICATION button. Going forward, you will receive notifications when there are changes to the Project Quay product, such as a new release.

Using SSL to protect connections to Project Quay

Using SSL/TLS

Documentation for Using SSL/TLS has been revised and moved to Securing Project Quay. This chapter will be removed in a future version of Project Quay.

Configuring action log storage for Elasticsearch and Splunk

By default, usage logs are stored in the Project Quay database and exposed through the web UI at the organization and repository levels. Appropriate administrative privileges are required to see log entries. For deployments with a large number of logged operations, you can store the usage logs in Elasticsearch or Splunk instead of the Project Quay database backend.

Configuring action log storage for Elasticsearch

Note: To configure action log storage for Elasticsearch, you must provide your own Elasticsearch stack; it is not included with Project Quay as a customizable component.

Enabling Elasticsearch logging can be done during Project Quay deployment or post-deployment by updating your config.yaml file. When configured, usage log access continues to be provided through the web UI for repositories and organizations.

Use the following procedure to configure action log storage for Elasticsearch:

- Obtain an Elasticsearch account.

- Update your Project Quay config.yaml file to include the following information:

      # ...
      LOGS_MODEL: elasticsearch (1)
      LOGS_MODEL_CONFIG:
        producer: elasticsearch (2)
        elasticsearch_config:
          host: http://<host.elasticsearch.example>:<port> (3)
          port: 9200 (4)
          access_key: <access_key> (5)
          secret_key: <secret_key> (6)
          use_ssl: True (7)
          index_prefix: <logentry> (8)
          aws_region: <us-east-1> (9)
      # ...

  (1) The method for handling log data.

  (2) Choose either Elasticsearch or Kinesis to direct logs to an intermediate Kinesis stream on AWS. You need to set up your own pipeline to send logs from Kinesis to Elasticsearch, for example, Logstash.

  (3) The hostname or IP address of the system providing the Elasticsearch service.

  (4) The port number providing the Elasticsearch service on the host you just entered. Note that the port must be accessible from all systems running the Project Quay registry. The default is TCP port 9200.

  (5) The access key needed to gain access to the Elasticsearch service, if required.

  (6) The secret key needed to gain access to the Elasticsearch service, if required.

  (7) Whether to use SSL/TLS for Elasticsearch. Defaults to True.

  (8) Choose a prefix to attach to log entries.

  (9) If you are running on AWS, set the AWS region (otherwise, leave it blank).

- Optional. If you are using Kinesis as your logs producer, you must include the following fields in your config.yaml file:

      kinesis_stream_config:
        stream_name: <kinesis_stream_name> (1)
        access_key: <aws_access_key> (2)
        secret_key: <aws_secret_key> (3)
        aws_region: <aws_region> (4)

  (1) The name of the Kinesis stream.

  (2) The name of the AWS access key needed to gain access to the Kinesis stream, if required.

  (3) The name of the AWS secret key needed to gain access to the Kinesis stream, if required.

  (4) The Amazon Web Services (AWS) region.

- Save your config.yaml file and restart your Project Quay deployment.
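The nesting of the Elasticsearch fields is easy to get wrong when editing config.yaml by hand. The following is a minimal sketch that builds the same structure as a Python dictionary and prints it as JSON, which YAML parsers generally accept as flow-style mappings. The function name elasticsearch_logs_config and the host es.example.com are hypothetical; the defaults for port and use_ssl mirror the values stated in the callouts above, and index_prefix reuses the placeholder name from the example.

```python
import json


def elasticsearch_logs_config(host, port=9200, index_prefix="logentry",
                              use_ssl=True, access_key=None, secret_key=None,
                              aws_region=None):
    """Return the LOGS_MODEL fields for Elasticsearch as a nested dict."""
    es = {"host": host, "port": port, "use_ssl": use_ssl, "index_prefix": index_prefix}
    # Only emit optional fields that were actually provided.
    optional = {"access_key": access_key, "secret_key": secret_key, "aws_region": aws_region}
    es.update({key: value for key, value in optional.items() if value is not None})
    return {
        "LOGS_MODEL": "elasticsearch",
        "LOGS_MODEL_CONFIG": {"producer": "elasticsearch", "elasticsearch_config": es},
    }


# es.example.com is a placeholder host, not a value from this guide.
config = elasticsearch_logs_config("es.example.com")
print(json.dumps(config, indent=2))
```

Leaving out unset optional keys keeps the generated fragment close to what you would write manually for a non-AWS deployment.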

Configuring action log storage for Splunk

Splunk is an alternative to Elasticsearch that can provide log analyses for your Project Quay data.

Enabling Splunk logging can be done during Project Quay deployment or post-deployment using the configuration tool. You can forward action logs either directly to Splunk or to the Splunk HTTP Event Collector (HEC).

Use the following procedures to enable Splunk for your Project Quay deployment.

Installing and creating a username for Splunk

Use the following procedure to install and create Splunk credentials.

- Create a Splunk account by navigating to Splunk and entering the required credentials.

- Navigate to the Splunk Enterprise Free Trial page, select your platform and installation package, and then click Download Now.

- Install the Splunk software on your machine. When prompted, create a username, for example, splunk_admin, and a password.

- After you create a username and password, a localhost URL is provided for your Splunk deployment, for example, http://<sample_url>.remote.csb:8000/. Open the URL in your preferred browser.

- Log in with the username and password you created during installation. You are directed to the Splunk UI.

Generating a Splunk bearer token

Use one of the following procedures to create a bearer token for Splunk.

Generating a Splunk bearer token using the Splunk UI

Use the following procedure to create a bearer token for Splunk using the Splunk UI.

- You have installed Splunk and created a username.

- On the Splunk UI, navigate to Settings → Tokens.

- Click Enable Token Authentication.

- Ensure that Token Authentication is enabled by clicking Token Settings and selecting Token Authentication if necessary.

- Optional: Set the expiration time for your token. This defaults to 30 days.

- Click Save.

- Click New Token.

- Enter information for User and Audience.

- Optional: Set the Expiration and Not Before information.

- Click Create. Your token appears in the Token box. Copy the token immediately.

  Important: If you close out of the box before copying the token, you must create a new token. The token in its entirety is not available after closing the New Token window.

Generating a Splunk bearer token using the CLI

Use the following procedure to create a bearer token for Splunk using the CLI.

- You have installed Splunk and created a username.

- In your CLI, enter the following CURL command to enable token authentication, passing in your Splunk username and password:

      $ curl -k -u <username>:<password> -X POST <scheme>://<host>:<port>/services/admin/token-auth/tokens_auth -d disabled=false

- Create a token by entering the following CURL command, passing in your Splunk username and password:

      $ curl -k -u <username>:<password> -X POST <scheme>://<host>:<port>/services/authorization/tokens?output_mode=json --data name=<username> --data audience=Users --data-urlencode expires_on=+30d

- Save the generated bearer token.
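For scripted setups, the token-creation call above could be expressed in Python roughly as follows. This is only a sketch: the endpoint path and form fields are taken from the CURL command in this procedure, while the function name build_token_request, the host splunk.example.com:8089, and the password are placeholders. The request is constructed but not sent, and the sketch does not reproduce the certificate-skipping behavior of curl -k.

```python
import base64
import urllib.parse
import urllib.request


def build_token_request(base_url, username, password, expires_on="+30d"):
    """Build (but do not send) the POST that asks Splunk for a bearer token."""
    # urlencode percent-encodes the leading "+" in "+30d", as --data-urlencode does.
    form = urllib.parse.urlencode(
        {"name": username, "audience": "Users", "expires_on": expires_on}
    ).encode("ascii")
    credentials = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{base_url}/services/authorization/tokens?output_mode=json",
        data=form,
        method="POST",
        headers={"Authorization": f"Basic {credentials}"},
    )


# Placeholder host and credentials for illustration only.
request = build_token_request("https://splunk.example.com:8089", "splunk_admin", "changeme")
print(request.full_url)
print(request.data.decode("ascii"))
```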

Generating an HEC ingest token

Obtain an HEC ingest token for Splunk HEC by using the Splunk web UI or the Splunk REST API.

Note: Splunk HEC tokens are ingest-only and cannot search.

- You have installed Splunk and created a username.

- To create an HEC token using the Splunk web UI:

  - Log in to Splunk via the web UI.

  - Click Settings → Data Inputs → HTTP Event Collector.

  - Click New Token.

  - Name the token, for example, quay-hec, and select the target index, for example, quay_logs.

  - Click Submit and copy the token value.

- To create an HEC token using the Splunk REST API:

  - Enable HEC by entering the following command:

        $ curl -k -u <username>:<password> \
            https://<splunk.example.com>:8089/servicesNS/admin/splunk_httpinput/data/inputs/http/http \
            -d "disabled=0"

  - Create an HEC token by entering the following command:

        $ curl -k -u <username>:<password> \
            "https://<splunk.example.com>:8089/servicesNS/admin/splunk_httpinput/data/inputs/http?output_mode=json" \
            -d "name=quay-hec" -d "index=quay_logs"

    Example output:

        {"entry":[{"content":{"token":"<your_bearer_token>"}}]}
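If you create the HEC token through the REST API, the token value has to be pulled out of the JSON response. The following sketch shows one way to do that in Python, assuming the response has the entry / content / token shape shown in the example output above. The function name extract_hec_token is hypothetical, and the sample token is the illustrative value used elsewhere in this guide.

```python
import json


def extract_hec_token(response_text):
    """Return the token from a response shaped like the example output."""
    payload = json.loads(response_text)
    entries = payload.get("entry") or []
    if not entries:
        raise ValueError("response contains no 'entry' items")
    token = entries[0].get("content", {}).get("token")
    if not token:
        raise ValueError("first entry has no content.token value")
    return token


sample = '{"entry":[{"content":{"token":"12345678-1234-1234-1234-1234567890ab"}}]}'
print(extract_hec_token(sample))
```

Raising an error on an unexpected shape, instead of returning an empty string, keeps a failed token request from silently producing an unusable config.yaml value.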

Configuring Project Quay to use Splunk

Use the following procedure to configure Project Quay to use Splunk or the Splunk HTTP Event Collector (HEC).

- You have installed Splunk and created a username.

- You have generated a Splunk bearer token.

- Configure Project Quay to use Splunk or the Splunk HTTP Event Collector (HEC).

  - If opting to use Splunk, open your Project Quay config.yaml file and add the following configuration fields:

        # ...
        LOGS_MODEL: splunk
        LOGS_MODEL_CONFIG:
          producer: splunk
          splunk_config:
            host: http://<user_name>.remote.csb (1)
            port: 8089 (2)
            bearer_token: <bearer_token> (3)
            url_scheme: <http/https> (4)
            verify_ssl: False (5)
            index_prefix: <splunk_log_index_name> (6)
            ssl_ca_path: <location_to_ssl-ca-cert.pem> (7)
            search_timeout: 60 (8)
            max_results: 10000 (9)
            export_batch_size: 5000 (10)
        # ...

    (1) String. The Splunk cluster endpoint.

    (2) Integer. The Splunk management cluster endpoint port. Differs from the Splunk GUI hosted port. Can be found on the Splunk UI under Settings → Server Settings → General Settings.

    (3) String. The generated bearer token for Splunk.

    (4) String. The URL scheme for accessing the Splunk service. If Splunk is configured to use TLS/SSL, this must be https.

    (5) Boolean. Whether to enable TLS/SSL. Defaults to True.

    (6) String. The Splunk index prefix. Can be a new, or used, index. Can be created from the Splunk UI.

    (7) String. The relative container path to a single .pem file containing a certificate authority (CA) for TLS/SSL validation.

    (8) Integer. The timeout for Splunk search queries in seconds. Increase for slow Splunk clusters or complex queries.

    (9) Integer. The maximum number of results to return per search query. Larger values require more memory.

    (10) Integer. The batch size for log export operations.

  - If opting to use Splunk HEC, open your Project Quay config.yaml file and add the following configuration fields:

        # ...
        LOGS_MODEL: splunk
        LOGS_MODEL_CONFIG:
          producer: splunk_hec (1)
          splunk_hec_config: (2)
            host: prd-p-aaaaaq.splunkcloud.com (3)
            port: 8088 (4)
            hec_token: 12345678-1234-1234-1234-1234567890ab (5)
            url_scheme: https (6)
            verify_ssl: False (7)
            index: quay (8)
            splunk_host: quay-dev (9)
            splunk_sourcetype: quay_logs (10)
            timeout: 10 (11)
            search_token: <bearer_token> (12)
            search_host: <splunk.example.com> (13)
            search_port: 8089 (14)
            search_timeout: 60 (15)
            max_results: 10000 (16)
            export_batch_size: 5000 (17)
        # ...

    (1) Specify splunk_hec when configuring Splunk HEC.

    (2) Logs model configuration for Splunk HTTP event collector action logs configuration.

    (3) The Splunk cluster endpoint.

    (4) Splunk management cluster endpoint port.

    (5) HEC token for Splunk.

    (6) The URL scheme for accessing the Splunk service. If Splunk is behind SSL/TLS, must be https.

    (7) Boolean. Enable (true) or disable (false) SSL/TLS verification for HTTPS connections.

    (8) The Splunk index to use.

    (9) The host name to log this event.

    (10) The name of the Splunk sourcetype to use.

    (11) Timeout in seconds for HTTP requests to Splunk HEC endpoint. Prevents requests from hanging indefinitely when Splunk is unresponsive.

    (12) String. Optional. Bearer token for Splunk search API. Required because HEC tokens are ingest-only and cannot search. For more information, see Manage HEC tokens.

    (13) String. Splunk management host for search API. Defaults to HEC host if not specified.

    (14) Integer. Splunk management port for search API. Defaults to 8089 if not specified.

    (15) Integer. The timeout for Splunk search queries in seconds. Increase for slow Splunk clusters or complex queries.

    (16) Integer. The maximum number of results to return per search query. Larger values require more memory.

    (17) Integer. The batch size for log export operations.

- If you are configuring ssl_ca_path, you must configure the SSL/TLS certificate so that Project Quay will trust it.

  - If you are using a standalone deployment of Project Quay, SSL/TLS certificates can be provided by placing the certificate file inside of the extra_ca_certs directory, or inside of the relative container path and specified by ssl_ca_path.

  - If you are using the Project Quay Operator, create a config bundle secret, including the certificate authority (CA) of the Splunk server. For example:

        $ oc create secret generic --from-file config.yaml=./config_390.yaml --from-file extra_ca_cert_splunkserver.crt=./splunkserver.crt config-bundle-secret

    Specify the conf/stack/extra_ca_certs/splunkserver.crt file in your config.yaml. For example:

        # ...
        LOGS_MODEL: splunk
        LOGS_MODEL_CONFIG:
          producer: splunk
          splunk_config:
            host: ec2-12-345-67-891.us-east-2.compute.amazonaws.com
            port: 8089
            bearer_token: eyJra
            url_scheme: https
            verify_ssl: true
            index_prefix: quay123456
            ssl_ca_path: conf/stack/splunkserver.crt
        # ...
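To see how the splunk_hec_config fields relate to an actual HEC call, the following sketch builds a generic request for Splunk's documented /services/collector/event endpoint, which authenticates with an "Authorization: Splunk <token>" header. This illustrates the HEC protocol only and is not a description of how Project Quay constructs its requests. The function name build_hec_request and the sample event are hypothetical; the configuration values are the example values from this procedure. The request is built but not sent.

```python
import json
import urllib.request


def build_hec_request(hec_config, event):
    """Build (but do not send) a generic HEC event request from config fields."""
    url = "{url_scheme}://{host}:{port}/services/collector/event".format(**hec_config)
    body = {
        "event": event,
        "index": hec_config["index"],
        "host": hec_config["splunk_host"],
        "sourcetype": hec_config["splunk_sourcetype"],
    }
    return urllib.request.Request(
        url=url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={"Authorization": "Splunk " + hec_config["hec_token"]},
    )


# Example values copied from the splunk_hec_config snippet in this procedure.
hec_config = {
    "host": "prd-p-aaaaaq.splunkcloud.com",
    "port": 8088,
    "hec_token": "12345678-1234-1234-1234-1234567890ab",
    "url_scheme": "https",
    "index": "quay",
    "splunk_host": "quay-dev",
    "splunk_sourcetype": "quay_logs",
}
request = build_hec_request(hec_config, {"kind": "push_repo"})
print(request.full_url)
```

A request like this can be a quick way to confirm that the host, port, and token are usable before restarting Project Quay with the new configuration.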

Creating an action log

Use the following procedure to create a user account that can forward action logs to Splunk.

- You have installed Splunk and created a username.

- You have generated a Splunk bearer token.

- You have configured your Project Quay config.yaml file to enable Splunk.

- Log in to your Project Quay deployment.

- Click on the name of the organization that you will use to create an action log for Splunk.

- In the navigation pane, click Robot Accounts → Create Robot Account.

- When prompted, enter a name for the robot account, for example, splunkrobotaccount, then click Create robot account.

- On your browser, open the Splunk UI.

- Click Search and Reporting.

- In the search bar, enter the name of your index, for example, <splunk_log_index_name>, and press Enter.

  The search results populate on the Splunk UI. Logs are forwarded in JSON format. A response might look similar to the following:

      {
        "log_data": {
          "kind": "authentication", (1)
          "account": "quayuser123", (2)
          "performer": "John Doe", (3)
          "repository": "projectQuay", (4)
          "ip": "192.168.1.100", (5)
          "metadata_json": {...}, (6)
          "datetime": "2024-02-06T12:30:45Z" (7)
        }
      }

  (1) Specifies the type of log event. In this example, authentication indicates that the log entry relates to an authentication event.

  (2) The user account involved in the event.

  (3) The individual who performed the action.

  (4) The repository associated with the event.

  (5) The IP address from which the action was performed.

  (6) Might contain additional metadata related to the event.

  (7) The timestamp of when the event occurred.
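When post-processing forwarded events outside of Splunk, the fields described above can be read directly from the JSON payload. The following is a small sketch, assuming events arrive in the log_data shape shown in the sample response; the function name summarize_action_log is hypothetical, and metadata_json is filled with an empty object because the sample elides its contents.

```python
import json


def summarize_action_log(raw):
    """Turn one forwarded action log event into a single readable line."""
    data = json.loads(raw)["log_data"]
    # Extra keys such as account and metadata_json are ignored by format().
    return "{datetime} {kind} by {performer} on {repository} from {ip}".format(**data)


sample_event = json.dumps({
    "log_data": {
        "kind": "authentication",
        "account": "quayuser123",
        "performer": "John Doe",
        "repository": "projectQuay",
        "ip": "192.168.1.100",
        "metadata_json": {},
        "datetime": "2024-02-06T12:30:45Z",
    }
})
print(summarize_action_log(sample_event))
```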

Displaying Splunk audit logs in the Project Quay UI

When you enable Splunk log forwarding in Project Quay, you can view and query audit logs in the organization, repository, and superuser admin panels. This read capability matches other log backends so you can review audit logs in the UI instead of using Splunk directly.

- You have created an hec_token.

  Note: For the HEC producer, two tokens are required: hec_token for writing logs and search_token for reading logs in the UI. The search_token is a bearer token (the same type you create in "Generating a Splunk bearer token"). HEC tokens are ingest-only and cannot run searches.

- You have configured Quay to forward action logs to Splunk.

- Update your config.yaml file:

  - To display Splunk SDK audit logs on the Project Quay UI, use the following reference:

        LOGS_MODEL: splunk
        LOGS_MODEL_CONFIG:
          producer: splunk
          splunk_config:
            host: <splunk.example.com>
            port: 8089
            bearer_token: <your_bearer_token>
            url_scheme: https
            verify_ssl: false
            index_prefix: quay_logs
            search_timeout: 60
            max_results: 10000
            export_batch_size: 5000

    where:

    - <splunk.example.com>: Specifies the host name of your Splunk instance.

    - <your_bearer_token>: Specifies the bearer token you generated in the "Generating a Splunk bearer token" guide.

    - index_prefix: Specifies the Splunk index prefix.

  - To display Splunk HEC logs on the Project Quay UI, include the generated search_token and hec_token. For example:

        LOGS_MODEL: splunk
        LOGS_MODEL_CONFIG:
          producer: splunk_hec
          splunk_hec_config:
            host: <splunk.example.com>
            port: 8088
            hec_token: <your_hec_token>
            search_token: <your_bearer_token>
            url_scheme: https
            verify_ssl: true
            ssl_ca_path: conf/stack/ca.pem
            index: quay_logs
            splunk_host: <quay-server.example.com>
            splunk_sourcetype: access_combined
            timeout: 10
            search_host: <splunk.example.com>
            search_port: 8089
            search_timeout: 60
            max_results: 10000
            export_batch_size: 5000

    where:

    - <splunk.example.com>: Specifies the host name of your Splunk instance (used for both the HEC endpoint and the search API).

    - 8088: Specifies the port number for the Splunk HEC endpoint.

    - <your_hec_token>: Specifies the HEC token you generated in the "Generating an HEC ingest token" guide.

    - <your_bearer_token>: Optional. Specifies the bearer token you generated in the "Generating a Splunk bearer token" guide.

    - <quay-server.example.com>: Specifies the host name of your Project Quay instance.

- Restart your Project Quay instance to apply the changes.

- Push an example image to your Project Quay instance to generate an audit log by entering the following command. Note that you can push to an organization or a repository.

      $ podman push <quay-server.example.com>/<organization_name>/busybox:test

- On the Project Quay UI, open the Logs view in one of these places:

  - Organizations → <organization_name> → Logs

  - Repositories → <organization_name> / <repository_name> → Logs

  - Superuser → Usage Logs

- The Splunk audit log entry for busybox:test is available.
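Because the HEC producer needs both hec_token (for writing) and search_token (for reading logs in the UI), a quick pre-flight check of the configuration can catch a missing token before you restart. The following sketch assumes the LOGS_MODEL_CONFIG section has already been loaded into a Python dictionary; the function name missing_hec_ui_fields is hypothetical and is not part of Project Quay.

```python
def missing_hec_ui_fields(logs_model_config):
    """List the HEC tokens that are absent or empty; other producers return []."""
    if logs_model_config.get("producer") != "splunk_hec":
        return []
    hec = logs_model_config.get("splunk_hec_config", {})
    return [name for name in ("hec_token", "search_token") if not hec.get(name)]


# A configuration that can write logs but cannot display them in the UI.
write_only = {
    "producer": "splunk_hec",
    "splunk_hec_config": {"host": "splunk.example.com", "hec_token": "example-hec-token"},
}
print(missing_hec_ui_fields(write_only))
```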

Understanding usage logs

By default, usage logs are stored in the Project Quay database. They are exposed through the web UI, on the organization and repository levels, and in the Superuser Admin Panel.

Database logs capture a wide range of events in Project Quay, such as the changing of account plans, user actions, and general operations. Log entries include information such as the action performed (kind_id), the user who performed the action (account_id or performer_id), the timestamp (datetime), and other relevant data associated with the action (metadata_json).

Viewing database logs

The following procedure shows you how to view repository logs that are stored in a PostgreSQL database.

- You have administrative privileges.

- You have installed the psql CLI tool.

- Enter the following command to log in to your Project Quay PostgreSQL database:

      $ psql -h <quay-server.example.com> -p 5432 -U <user_name> -d <database_name>

  Example output

      psql (16.1, server 13.7)
      Type "help" for help.

- Optional. Enter the following command to display the tables list of your PostgreSQL database:

      quay=> \dt

  Example output

                 List of relations
       Schema |     Name     | Type  |  Owner
      --------+--------------+-------+----------
       public | logentry     | table | quayuser
       public | logentry2    | table | quayuser
       public | logentry3    | table | quayuser
       public | logentrykind | table | quayuser
       ...

- Enter the following command to return a list of repository_ids that are required to return log information:

      quay=> SELECT id, name FROM repository;

  Example output

       id |        name
      ----+---------------------
        3 | new_repository_name
        6 | api-repo
        7 | busybox
       ...

- Enter the following command to use the logentry3 relation to show log information about one of your repositories:

      SELECT * FROM logentry3 WHERE repository_id = <repository_id>;

  Example output

       id | kind_id | account_id | performer_id | repository_id | datetime                   | ip            | metadata_json
       59 | 14      | 2          | 1            | 6             | 2024-05-13 15:51:01.897189 | 192.168.1.130 | {"repo": "api-repo", "namespace": "test-org"}

  In the above example, the following information is returned:

      {
        "log_data": {
          "id": 59, (1)
          "kind_id": "14", (2)
          "account_id": "2", (3)
          "performer_id": "1", (4)
          "repository_id": "6", (5)
          "ip": "192.168.1.130", (6)
          "metadata_json": {"repo": "api-repo", "namespace": "test-org"}, (7)
          "datetime": "2024-05-13 15:51:01.897189" (8)
        }
      }

  (1) The unique identifier for the log entry.

  (2) The action that was done. In this example, it was 14. The key, or table, in the following section shows you that this kind_id is related to the creation of a repository.

  (3) The account that performed the action.

  (4) The performer of the action.

  (5) The repository that the action was done on. In this example, 6 correlates to the api-repo that was discovered in Step 3.

  (6) The IP address where the action was performed.

  (7) Metadata information, including the name of the repository and its namespace.

  (8) The time when the action was performed.
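Looking up each kind_id by hand becomes tedious, so a join against the logentrykind table can resolve the action name in the query itself. The following sketch demonstrates the idea with Python's built-in sqlite3 module as a stand-in for PostgreSQL, so it runs without a Quay database. It assumes that logentrykind has id and name columns, which this guide does not show, and it loads only the single example row from the output above.

```python
import sqlite3

# In-memory stand-in for the Quay database; column layout follows the example output.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE logentrykind (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE repository (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE logentry3 (
    id INTEGER PRIMARY KEY, kind_id INTEGER, account_id INTEGER,
    performer_id INTEGER, repository_id INTEGER, datetime TEXT,
    ip TEXT, metadata_json TEXT
);
INSERT INTO logentrykind VALUES (14, 'create_repo');
INSERT INTO repository VALUES (6, 'api-repo');
INSERT INTO logentry3 VALUES (
    59, 14, 2, 1, 6, '2024-05-13 15:51:01.897189', '192.168.1.130',
    '{"repo": "api-repo", "namespace": "test-org"}'
);
""")

# Resolve kind_id and repository_id to readable names in one query.
rows = conn.execute(
    """
    SELECT l.id, k.name, r.name, l.datetime
    FROM logentry3 AS l
    JOIN logentrykind AS k ON k.id = l.kind_id
    JOIN repository AS r ON r.id = l.repository_id
    WHERE l.repository_id = ?
    """,
    (6,),
).fetchall()
print(rows)
```

If the assumed column names match your schema, the same SELECT statement should work in psql with a literal repository ID in place of the ? placeholder.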

Log entry kind_ids

The following table represents the kind_ids associated with Project Quay actions.

| kind_id | Action | Description |
|---|---|---|
| 1 | account_change_cc | Change of credit card information. |
| 2 | account_change_password | Change of account password. |
| 3 | account_change_plan | Change of account plan. |
| 4 | account_convert | Account conversion. |
| 5 | add_repo_accesstoken | Adding an access token to a repository. |
| 6 | add_repo_notification | Adding a notification to a repository. |
| 7 | add_repo_permission | Adding permissions to a repository. |
| 8 | add_repo_webhook | Adding a webhook to a repository. |
| 9 | build_dockerfile | Building a Dockerfile. |
| 10 | change_repo_permission | Changing permissions of a repository. |
| 11 | change_repo_visibility | Changing the visibility of a repository. |
| 12 | create_application | Creating an application. |
| 13 | create_prototype_permission | Creating permissions for a prototype. |
| 14 | create_repo | Creating a repository. |
| 15 | create_robot | Creating a robot (service account or bot). |
| 16 | create_tag | Creating a tag. |
| 17 | delete_application | Deleting an application. |
| 18 | delete_prototype_permission | Deleting permissions for a prototype. |
| 19 | delete_repo | Deleting a repository. |
| 20 | delete_repo_accesstoken | Deleting an access token from a repository. |
| 21 | delete_repo_notification | Deleting a notification from a repository. |
| 22 | delete_repo_permission | Deleting permissions from a repository. |
| 23 | delete_repo_trigger | Deleting a repository trigger. |
| 24 | delete_repo_webhook | Deleting a webhook from a repository. |
| 25 | delete_robot | Deleting a robot. |
| 26 | delete_tag | Deleting a tag. |
| 27 | manifest_label_add | Adding a label to a manifest. |
| 28 | manifest_label_delete | Deleting a label from a manifest. |
| 29 | modify_prototype_permission | Modifying permissions for a prototype. |
| 30 | move_tag | Moving a tag. |
| 31 | org_add_team_member | Adding a member to a team. |
| 32 | org_create_team | Creating a team within an organization. |
| 33 | org_delete_team | Deleting a team within an organization. |
| 34 | org_delete_team_member_invite | Deleting a team member invitation. |
| 35 | org_invite_team_member | Inviting a member to a team in an organization. |
| 36 | org_remove_team_member | Removing a member from a team. |
| 37 | org_set_team_description | Setting the description of a team. |
| 38 | org_set_team_role | Setting the role of a team. |
| 39 | org_team_member_invite_accepted | Acceptance of a team member invitation. |
| 40 | org_team_member_invite_declined | Declining of a team member invitation. |
| 41 | pull_repo | Pull from a repository. |
| 42 | push_repo | Push to a repository. |
| 43 | regenerate_robot_token | Regenerating a robot token. |
| 44 | repo_verb | Generic repository action (specifics might be defined elsewhere). |
| 45 | reset_application_client_secret | Resetting the client secret of an application. |
| 46 | revert_tag | Reverting a tag. |
| 47 | service_key_approve | Approving a service key. |
| 48 | service_key_create | Creating a service key. |
| 49 | service_key_delete | Deleting a service key. |
| 50 | service_key_extend | Extending a service key. |
| 51 | service_key_modify | Modifying a service key. |
| 52 | service_key_rotate | Rotating a service key. |
| 53 | setup_repo_trigger | Setting up a repository trigger. |
| 54 | set_repo_description | Setting the description of a repository. |
| 55 | take_ownership | Taking ownership of a resource. |
| 56 | update_application | Updating an application. |
| 57 | change_repo_trust | Changing the trust level of a repository. |
| 58 | reset_repo_notification | Resetting repository notifications. |
| 59 | change_tag_expiration | Changing the expiration date of a tag. |
| 60 | create_app_specific_token | Creating an application-specific token. |
| 61 | revoke_app_specific_token | Revoking an application-specific token. |
| 62 | toggle_repo_trigger | Toggling a repository trigger on or off. |
| 63 | repo_mirror_enabled | Enabling repository mirroring. |
| 64 | repo_mirror_disabled | Disabling repository mirroring. |
| 65 | repo_mirror_config_changed | Changing the configuration of repository mirroring. |
| 66 | repo_mirror_sync_started | Starting a repository mirror sync. |
| 67 | repo_mirror_sync_failed | Repository mirror sync failed. |
| 68 | repo_mirror_sync_success | Repository mirror sync succeeded. |
| 69 | repo_mirror_sync_now_requested | Immediate repository mirror sync requested. |
| 70 | repo_mirror_sync_tag_success | Repository mirror tag sync succeeded. |
| 71 | repo_mirror_sync_tag_failed | Repository mirror tag sync failed. |
| 72 | repo_mirror_sync_test_success | Repository mirror sync test succeeded. |
| 73 | repo_mirror_sync_test_failed | Repository mirror sync test failed. |
| 74 | repo_mirror_sync_test_started | Repository mirror sync test started. |
| 75 | change_repo_state | Changing the state of a repository. |
| 76 | create_proxy_cache_config | Creating proxy cache configuration. |
| 77 | delete_proxy_cache_config | Deleting proxy cache configuration. |
| 78 | start_build_trigger | Starting a build trigger. |
| 79 | cancel_build | Cancelling a build. |
| 80 | org_create | Creating an organization. |
| 81 | org_delete | Deleting an organization. |
| 82 | org_change_email | Changing organization email. |
| 83 | org_change_invoicing | Changing organization invoicing. |
| 84 | org_change_tag_expiration | Changing organization tag expiration. |
| 85 | org_change_name | Changing organization name. |
| 86 | user_create | Creating a user. |
| 87 | user_delete | Deleting a user. |
| 88 | user_disable | Disabling a user. |
| 89 | user_enable | Enabling a user. |
| 90 | user_change_email | Changing user email. |
| 91 | user_change_password | Changing user password. |
| 92 | user_change_name | Changing user name. |
| 93 | user_change_invoicing | Changing user invoicing. |
| 94 | user_change_tag_expiration | Changing user tag expiration. |
| 95 | user_change_metadata | Changing user metadata. |
| 96 | user_generate_client_key | Generating a client key for a user. |
| 97 | login_success | Successful login. |
| 98 | logout_success | Successful logout. |
| 99 | permanently_delete_tag | Permanently deleting a tag. |
| 100 | autoprune_tag_delete | Auto-pruning tag deletion. |
| 101 | create_namespace_autoprune_policy | Creating namespace auto-prune policy. |

102 |

update_namespace_autoprune_policy |

Updating namespace auto-prune policy. |

103 |

delete_namespace_autoprune_policy |

Deleting namespace auto-prune policy. |

104 |

login_failure |

Failed login attempt. |
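The kind names in the table above share prefixes that make them easy to group. As a minimal sketch, assuming each exported log entry is a dictionary with a `kind` key holding one of the names above (the entry shape and both function names are illustrative assumptions, not a documented Project Quay interface), mirror-related events could be selected like this:

```python
from collections import Counter
from typing import Dict, Iterable, List

# Prefix shared by the repository mirroring kinds listed in the table above.
MIRROR_PREFIX = "repo_mirror_"


def mirror_events(entries: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Keep only the entries whose kind starts with the repo_mirror_ prefix."""
    return [e for e in entries if e.get("kind", "").startswith(MIRROR_PREFIX)]


def count_by_kind(entries: Iterable[Dict[str, str]]) -> Counter:
    """Count how often each kind appears, ignoring entries without a kind."""
    return Counter(e["kind"] for e in entries if "kind" in e)


# Hypothetical sample entries, for illustration only.
sample = [
    {"kind": "push_repo"},
    {"kind": "repo_mirror_sync_failed"},
    {"kind": "repo_mirror_sync_success"},
    {"kind": "login_failure"},
]
print([e["kind"] for e in mirror_events(sample)])
```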

About Clair

Clair enriches vulnerability data with Common Vulnerability Scoring System (CVSS) data from the National Vulnerability Database (NVD), a United States government repository of security-related information, including known vulnerabilities and security issues in various software components and systems. Using scores from the NVD provides Clair the following benefits:

-

Data synchronization. Clair can periodically synchronize its vulnerability database with the NVD. This ensures that it has the latest vulnerability data.

-

Matching and enrichment. Clair compares the metadata and identifiers of vulnerabilities it discovers in container images with the data from the NVD. This process involves matching the unique identifiers, such as Common Vulnerabilities and Exposures (CVE) IDs, to the entries in the NVD. When a match is found, Clair can enrich its vulnerability information with additional details from NVD, such as severity scores, descriptions, and references.

-

Severity Scores. The NVD assigns severity scores to vulnerabilities, such as the Common Vulnerability Scoring System (CVSS) score, to indicate the potential impact and risk associated with each vulnerability. By incorporating NVD’s severity scores, Clair can provide more context on the seriousness of the vulnerabilities it detects.

If Clair finds vulnerabilities from NVD, a detailed and standardized assessment of the severity and potential impact of vulnerabilities detected within container images is reported to users on the UI. CVSS enrichment data provides Clair the following benefits:

-

Vulnerability prioritization. By utilizing CVSS scores, users can prioritize vulnerabilities based on their severity, helping them address the most critical issues first.

-

Assess Risk. CVSS scores can help Clair users understand the potential risk a vulnerability poses to their containerized applications.

-

Communicate Severity. CVSS scores provide Clair users a standardized way to communicate the severity of vulnerabilities across teams and organizations.

-

Inform Remediation Strategies. CVSS enrichment data can guide Clair users in developing appropriate remediation strategies.

-

Compliance and Reporting. Integrating CVSS data into reports generated by Clair can help organizations demonstrate their commitment to addressing security vulnerabilities and complying with industry standards and regulations.
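The prioritization benefit described above can be sketched in a few lines. The severity bands follow the CVSS v3.x qualitative rating scale. The `Finding` type, its field names, and the sample identifiers are hypothetical and only stand in for whatever report format you work with. This is an illustration, not Clair's implementation.

```python
from typing import List, NamedTuple


class Finding(NamedTuple):
    cve_id: str        # hypothetical identifier, for illustration only
    cvss_score: float  # CVSS v3.x base score in the range 0.0 to 10.0


def severity_band(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def prioritize(findings: List[Finding]) -> List[Finding]:
    """Return the findings ordered from highest to lowest score."""
    return sorted(findings, key=lambda f: f.cvss_score, reverse=True)


# Hypothetical findings; the most severe one is printed first.
for finding in prioritize([Finding("CVE-EXAMPLE-1", 5.3), Finding("CVE-EXAMPLE-2", 9.8)]):
    print(finding.cve_id, severity_band(finding.cvss_score))
```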

Documentation for installing and configuring Clair can be found in Vulnerability reporting with Clair on Project Quay.

Mirroring images with Project Quay

To mirror images from external registries into your Project Quay cluster and synchronize by repository or organization names and tags, you can use repository mirroring.

From your Project Quay cluster with mirroring enabled, you can perform the following:

-

Choose a repository or organization from an external registry to mirror

-

Add credentials to access the external registry

-

Identify specific container image repository or organization names and tags to sync

-

Set intervals at which a repository or organization is synced

-

Check the current state of synchronization

-

Filter the architectures that are mirrored

To use the mirroring functionality, you need to perform the following actions:

-

Enable mirroring in the Project Quay configuration file

-

Run a mirroring worker

-

Create mirrored repositories

All mirroring configurations can be performed by using the configuration tool UI or by the Project Quay API.

Mirroring compared to geo-replication

To replicate your entire image storage backend data across multiple environments, configure Project Quay geo-replication. Synchronizing different storage backends while sharing a central database ensures consistent, highly available access to your registry.

For example, a geo-replicated deployment can be a single Project Quay registry that has two different blob storage endpoints.

The primary use cases for geo-replication include the following:

-

Speeding up access to the binary blobs for geographically dispersed setups

-

Guaranteeing that the image content is the same across regions

Mirroring synchronizes selected repositories, or subsets of repositories, from one registry to another. The registries are distinct, with each registry having a separate database and separate image storage.

The primary use cases for mirroring include the following:

-

Independent registry deployments in different data centers or regions, where a certain subset of the overall content is supposed to be shared across the data centers and regions

-

Automatic synchronization or mirroring of selected (allowlisted) upstream repositories from external registries into a local Project Quay deployment

Note

Mirroring and geo-replication can be used simultaneously.

| Feature / Capability | Geo-replication | Mirroring |
|---|---|---|
| What is the feature designed to do? | A shared, global registry | Distinct, different registries |
| What happens if replication or mirroring has not been completed yet? | The remote copy is used (slower) | No image is served |
| Is access to all storage backends in both regions required? | Yes (all Project Quay nodes) | No (distinct storage) |
| Can users push images from both sites to the same repository or organization? | Yes | No |
| Is all registry content and configuration identical across all regions (shared database)? | Yes | No |
| Can users select individual namespaces or repositories to be mirrored? | No | Yes |
| Can users apply filters to synchronization rules? | No | Yes |
| Are individual or different role-based access control configurations allowed in each region? | No | Yes |

Using mirroring

To automatically synchronize container images across different environments, configure mirroring for your Project Quay repository or organization. By reviewing the supported features and limitations of this capability, you can correctly plan your registry synchronization architecture.

The following list shows features and limitations of Project Quay mirroring for a repository or organization.

Note

The word entity is used in the mirroring documentation to refer to either a repository or organization.

-

With mirroring, you can mirror an entire entity or selectively limit which images are synced. Filters can be based on a comma-separated list of tags, a range of tags, or other means of identifying tags through Unix shell-style wildcards. For more information, see the documentation for wildcards.

-

After you set mirroring for an entity, you cannot manually add other images to that entity.

-

Because the mirrored entity is based on the entity and the tags that you set, the entity holds only the content represented by the entity and tag pair. For example, if you change the tag so that some images in the entity no longer match, those images will be deleted.

-

Only the designated robot can push images to a mirrored entity, superseding any role-based access control permissions set on the entity.

-

Mirroring can be configured to roll back on failure, or to run on a best-effort basis.

-

With a mirrored entity, a user with read permissions can pull images from the entity but cannot push images to the entity.

-

Changing settings on your mirrored entity can be performed in the Project Quay user interface.

-

Images are synced at set intervals, but can also be synced on demand.

Creating a mirroring worker

To use mirroring in a standalone deployment of Project Quay, you must create a mirroring worker by running a Podman container with the repomirror option. Mount your /root/ca.crt CA bundle when you use TLS with that certificate.

-

If you have not configured TLS communications using a /root/ca.crt certificate, enter the following command to start a mirroring worker:

    $ sudo podman run -d --name mirroring-worker \
        -v $QUAY/config:/conf/stack:Z \
        quay.io/projectquay/quay:v3.17.1 repomirror

-

If you have configured TLS communications using a /root/ca.crt certificate, enter the following command to start the repository mirroring worker:

    $ sudo podman run -d --name mirroring-worker \
        -v $QUAY/config:/conf/stack:Z \
        -v /root/ca.crt:/etc/pki/ca-trust/source/anchors/ca.crt:Z \
        quay.io/projectquay/quay:v3.17.1 repomirror
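The two commands above differ only in the additional CA bundle mount. As a rough sketch, assuming you start the worker from a Python wrapper script (the function name `build_worker_command`, its parameters, and the `/opt/quay` path are illustrative and not part of Project Quay), the argument list could be assembled as follows:

```python
from typing import List, Optional

# Image reference taken from the commands above.
QUAY_IMAGE = "quay.io/projectquay/quay:v3.17.1"


def build_worker_command(quay_dir: str, ca_cert: Optional[str] = None) -> List[str]:
    """Build the podman argument list for a repomirror worker.

    quay_dir is the directory that $QUAY points to. ca_cert is the host path
    of the CA bundle, or None when TLS with that certificate is not used.
    """
    command = [
        "sudo", "podman", "run", "-d", "--name", "mirroring-worker",
        "-v", f"{quay_dir}/config:/conf/stack:Z",
    ]
    if ca_cert is not None:
        # Mount the CA bundle into the trust anchors directory of the container.
        command += ["-v", f"{ca_cert}:/etc/pki/ca-trust/source/anchors/ca.crt:Z"]
    command += [QUAY_IMAGE, "repomirror"]
    return command


# /opt/quay is a hypothetical value for $QUAY.
print(" ".join(build_worker_command("/opt/quay", "/root/ca.crt")))
```

The resulting list can be passed to `subprocess.run` unchanged, which avoids shell quoting issues.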

Enabling organization mirroring for Project Quay

To use mirrored organizations, you can set FEATURE_ORG_MIRROR to true in your config.yaml file. Restart the registry after you update the configuration.

-

To enable organization mirroring, set the following configuration fields in your config.yaml file:

    # ...
    FEATURE_PROXY_CACHE: true
    FEATURE_REPO_MIRROR: true
    FEATURE_ORG_MIRROR: true
    ORG_MIRROR_INTERVAL: 60
    ORG_MIRROR_BATCH_SIZE: 100
    ORG_MIRROR_MAX_SYNC_DURATION: 3600
    ORG_MIRROR_DEFAULT_SKOPEO_TIMEOUT: 600
    ORG_MIRROR_DISCOVERY_TIMEOUT: 600
    ORG_MIRROR_MAX_REPOS_PER_ORG: 5000
    ORG_MIRROR_MAX_RETRIES: 3
    SSRF_ALLOWED_HOSTS:
      - harbor.example.lab
    # ...

where:

FEATURE_PROXY_CACHE-

Specifies whether to enable or disable proxy caching. This field must be set to true to use the organization mirroring feature.

FEATURE_REPO_MIRROR-

Specifies whether to enable or disable repository-level mirroring. This field must be set to true to use the organization mirroring feature.

FEATURE_ORG_MIRROR-

Specifies whether to enable or disable organization-level mirroring.

ORG_MIRROR_INTERVAL-

Specifies the worker processing interval in seconds.

ORG_MIRROR_BATCH_SIZE-

Specifies the number of organization mirrors to process for each iteration.

ORG_MIRROR_MAX_SYNC_DURATION-

Specifies the maximum sync duration in seconds.

ORG_MIRROR_DEFAULT_SKOPEO_TIMEOUT-

Specifies the default skopeo timeout in seconds.

ORG_MIRROR_DISCOVERY_TIMEOUT-

Specifies the discovery timeout in seconds.

ORG_MIRROR_MAX_REPOS_PER_ORG-

Specifies the maximum number of repositories to discover for each organization.

ORG_MIRROR_MAX_RETRIES-

Specifies the maximum number of sync retries for a failed operation.

SSRF_ALLOWED_HOSTS-

Specifies the allowed hosts for the Server Side Request Forgery (SSRF) protection. Use this optional field to allow specific hosts to be accessed by the registry.

-

Restart your Project Quay registry.
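Because organization mirroring depends on two other feature flags, it can help to check them before restarting the registry. The following is a minimal sketch that assumes the config.yaml file has already been parsed into a dictionary by a YAML library of your choice. The function name `org_mirror_flag_problems` is illustrative and is not part of Project Quay.

```python
from typing import Any, Dict, List

# Fields that the descriptions above say must be true for organization mirroring.
REQUIRED_FLAGS = ("FEATURE_PROXY_CACHE", "FEATURE_REPO_MIRROR")


def org_mirror_flag_problems(config: Dict[str, Any]) -> List[str]:
    """List the prerequisite flags that are missing or not set to true.

    Returns an empty list when FEATURE_ORG_MIRROR is not enabled, because
    there is nothing to check in that case.
    """
    if config.get("FEATURE_ORG_MIRROR") is not True:
        return []
    return [flag for flag in REQUIRED_FLAGS if config.get(flag) is not True]


# Example: proxy caching was left disabled, so it is reported.
print(org_mirror_flag_problems({
    "FEATURE_ORG_MIRROR": True,
    "FEATURE_PROXY_CACHE": False,
    "FEATURE_REPO_MIRROR": True,
}))
```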

Creating a mirroring organization by using the UI

To automatically synchronize container images between registries, you can use the Project Quay UI to create a mirroring organization. Configuring this automated synchronization ensures that your deployment always has access to the most up-to-date container files.

Note

Organization-level mirroring cannot be configured on an existing organization that already contains repositories. A dedicated organization must be created specifically to serve as a mirror target, with all repositories within the organization managed exclusively by the mirroring configuration.

-

You have a Quay organization with sufficient permissions.

-

You have created a robot account.

-

You have access to a source Harbor instance.

-

You have Harbor credentials, such as a username and a password or an API token.

-

-

You have set FEATURE_ORG_MIRROR: true in your config.yaml file.

-

You have set FEATURE_PROXY_CACHE: true in your config.yaml file.

-

You have set FEATURE_REPO_MIRROR: true in your config.yaml file.

-

For standalone Project Quay deployments, you have created a mirroring worker.

-

If you are using an OAuth token to mirror from Quay to Quay, your token must have the following permissions:

-

Administer Repositories

-

View all visible repositories

-

Read/Write to any accessible repositories

-

Administer User

-

-

On the Project Quay v2 UI, click Organizations in the navigation pane.

-

Find your organization listed under the Name column and then click on the name of the organization.

-

Click Settings → Organization state.

-

Click the Mirror radio button to set the organization state to mirroring.

-

Click the Submit button. Completion of this step takes you to the Mirroring tab.

-

Under the Source Registry section, complete the following settings:

-

For the Source Registry Type field, select Quay or Harbor.

-

In the Source Registry URL field, enter a valid URL, for example, https://registry.example.com.

-

For Source Namespace, enter the namespace or project name on the source registry, for example, my-project.

-

Select Private or Public for Repository Visibility.

-

For Start Date, set the date in yyyy-mm-dd format and set the time.

-

Set the Sync interval. Set the integer in the box and select seconds, minutes, hours, days, or weeks from the drop-down menu.

-

Set the Skopeo Timeout value for Skopeo operations.

-

Select a Robot User from the drop-down menu.

-

Set any desired Filter Patterns.

-

In the Credentials section, do one of the following actions:

-

If you specified Harbor for Source Registry Type, enter your username and password for the source registry.

-

If you specified Quay for Source Registry Type, you must specify $oauthtoken for the Username field and OAUTH_TOKEN for the Password field.

-

-

Optional: In Advanced Settings:

-

For Verify TLS, click the checkbox to verify certificates.

-

Set an HTTP Proxy URL.

-

Set an HTTPS Proxy URL.

-

Set a No Proxy URL.

-

-

When you have configured the desired settings, click Enable Organization Mirror.

-

On the Project Quay web console, click Organizations → the organization name → Mirroring.

-

Scroll to the Status section to view the status and verify the connection.

Creating a mirrored organization by using the API

To create a mirrored organization in Project Quay by using the API, you can send HTTP requests to the organization mirror API endpoints with curl and an OAuth bearer token. You can create and update mirror settings, read the configuration and mirrored repositories, start or cancel a sync, verify the external registry, and delete the setup.

Note

Organization-level mirroring cannot be configured on an existing organization that already contains repositories. A dedicated organization must be created specifically to serve as a mirror target, with all repositories within the organization managed exclusively by the mirroring configuration.

-

You have generated an OAuth access token.

-

Use the POST /api/v1/organization/{orgname}/mirror endpoint to create a new organization-level mirroring configuration:

    $ curl -X POST "https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror" \
        -H "Authorization: Bearer <access_token>" \
        -H "Accept: application/json" \
        -H "Content-Type: application/json" \
        -d '{
          "external_registry_type": "quay",
          "external_registry_url": "https://quay.io",
          "external_namespace": "<external_namespace>",
          "robot_username": "<orgname>+<robot_account>",
          "visibility": "private",
          "sync_interval": 3600,
          "sync_start_date": "2025-01-01T00:00:00Z",
          "is_enabled": true
        }'

-

Use the GET /api/v1/organization/{orgname}/mirror endpoint to retrieve the organization-level mirroring configuration:

    $ curl -X GET \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror

Example output

    {"is_enabled": true, "external_registry_type": "quay", "external_registry_url": "http://quay.io", "external_namespace": "test", "external_registry_username": null, "external_registry_config": {}, "repository_filters": [], "robot_username": "example+test", "visibility": "private", "sync_interval": 3600, "sync_start_date": "2025-01-01T00:00:00Z", "sync_expiration_date": null, "sync_status": "NEVER_RUN", "sync_retries_remaining": 3, "skopeo_timeout": 300, "creation_date": "2026-03-09T18:39:21.993431Z"}

-

Use the GET /api/v1/organization/{orgname}/mirror/repositories endpoint to obtain a list of repositories that are being mirrored in the organization:

    $ curl -X GET \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        "https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror/repositories?page=1&limit=100"

Example output

    {"repositories": [], "page": 1, "limit": 100, "total": 0, "has_next": false}

-

Use the POST /api/v1/organization/{orgname}/mirror/sync-now endpoint to trigger an immediate sync of all repositories in the organization:

    $ curl -X POST \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror/sync-now

This command does not return output in the CLI.

-

Use the POST /api/v1/organization/{orgname}/mirror/sync-cancel endpoint to cancel a pending sync of all repositories in the organization:

    $ curl -X POST \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror/sync-cancel

This command does not return output in the CLI.

-

Use the PUT /api/v1/organization/{orgname}/mirror endpoint to update the organization-level mirroring configuration:

    $ curl -X PUT \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        -H "Content-Type: application/json" \
        -d '{"is_enabled": true, "sync_interval": 7200}' \
        https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror

Example output

    " "

-

Use the POST /api/v1/organization/{orgname}/mirror/verify endpoint to verify the connection to the external registry for the organization-level mirroring configuration:

    $ curl -X POST \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror/verify

Example output

    {"success": false, "message": "Unexpected response: 301"}

-

Use the DELETE /api/v1/organization/{orgname}/mirror endpoint to delete the organization-level mirroring configuration:

    $ curl -X DELETE \
        -H "Authorization: Bearer <bearer_token>" \
        -H "Accept: application/json" \
        https://<quay-server.example.com>/api/v1/organization/<orgname>/mirror

This command does not return output in the CLI.
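The request body for the create endpoint can also be assembled programmatically. The sketch below builds the same JSON fields that appear in the POST example and converts an interval given as a number plus a unit into a single number. It assumes that `sync_interval` is expressed in seconds, which is consistent with the value 3600 in the example but is not stated explicitly here. The function names and defaults are illustrative.

```python
import json
from datetime import datetime, timezone

# Seconds per unit, for the units offered for the sync interval in the UI.
SECONDS_PER_UNIT = {
    "seconds": 1,
    "minutes": 60,
    "hours": 3600,
    "days": 86400,
    "weeks": 604800,
}


def interval_seconds(value: int, unit: str) -> int:
    """Convert a positive integer and a unit name into seconds."""
    if value <= 0:
        raise ValueError("interval must be a positive integer")
    return value * SECONDS_PER_UNIT[unit]


def build_mirror_payload(orgname: str, robot_account: str, external_namespace: str,
                         start: datetime, interval_value: int, interval_unit: str,
                         registry_type: str = "quay",
                         registry_url: str = "https://quay.io",
                         visibility: str = "private") -> str:
    """Return the JSON body for the create request as a string.

    start must be a timezone-aware datetime; it is converted to UTC.
    """
    body = {
        "external_registry_type": registry_type,
        "external_registry_url": registry_url,
        "external_namespace": external_namespace,
        "robot_username": f"{orgname}+{robot_account}",
        "visibility": visibility,
        "sync_interval": interval_seconds(interval_value, interval_unit),
        "sync_start_date": start.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "is_enabled": True,
    }
    return json.dumps(body)


# Hypothetical organization, robot, and namespace names.
print(build_mirror_payload("example", "test", "upstream",
                           datetime(2025, 1, 1, tzinfo=timezone.utc), 1, "hours"))
```

The returned string can be passed to curl with the -d option, or sent with any HTTP client together with the Authorization header shown in the commands above.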

Enabling repository mirroring for Project Quay

To use mirrored repositories, you can set FEATURE_REPO_MIRROR to true in your config.yaml file. Restart the registry after you update the configuration.

-

To enable mirroring for repositories, set FEATURE_REPO_MIRROR: true in your config.yaml file:

    # ...
    FEATURE_REPO_MIRROR: true
    REPO_MIRROR_INTERVAL: 30
    REPO_MIRROR_SERVER_HOSTNAME: "openshift-quay-service"
    REPO_MIRROR_TLS_VERIFY: true
    REPO_MIRROR_ROLLBACK: false
    FEATURE_SPARSE_INDEX: true
    REPO_MIRROR_MAX_MANIFEST_LIST_SIZE: 10485760
    REPO_MIRROR_MAX_MANIFEST_ENTRIES: 1000
    # ...

where:

FEATURE_REPO_MIRROR-

Specifies whether to enable or disable repository-level mirroring.

REPO_MIRROR_INTERVAL-

Specifies the worker processing interval in seconds.

REPO_MIRROR_SERVER_HOSTNAME-

Specifies the hostname of the server hosting the mirrored repository.

REPO_MIRROR_TLS_VERIFY-

Specifies whether to verify the TLS certificate of the mirrored repository.

REPO_MIRROR_ROLLBACK-

Specifies whether to roll back the repository if the mirroring fails.

FEATURE_SPARSE_INDEX-

Specifies whether to allow sparse manifest indexes.

REPO_MIRROR_MAX_MANIFEST_LIST_SIZE-

Specifies the maximum size of the manifest list in bytes.

REPO_MIRROR_MAX_MANIFEST_ENTRIES-

Specifies the maximum number of manifest entries to process.

-

Restart your Project Quay registry.

Creating a mirrored repository by using the UI

When mirroring a repository from an external container registry, you must create a new private repository. Typically, the same name is used as the target repository, for example, quay-rhel9.

-

You have set FEATURE_REPO_MIRROR: true in your config.yaml file.

-

For standalone Project Quay deployments, you have created a mirroring worker.

-

You have created a robot account.

-

Navigate to the Repositories page of your registry and click the name of a repository, for example, test-mirror.

-

Click Settings → Repository state.

-

Click Mirror.

-

Click the Mirroring tab and enter the details for connecting to the external registry, along with the tags, scheduling and access information:

-

Enter the details as required in the following fields:

-

Registry Location: The external repository you want to mirror, for example, registry.redhat.io/quay/quay-rhel8.

-

Tags: Enter a comma-separated list of individual tags or tag patterns. (See the Mirroring tag patterns section for details.)

-

Architecture Filter: Select the architectures that you want to mirror. For example, select AMD64 (x86_64) to mirror only the x86_64 architecture. By default, all architectures are mirrored.

-

Start Date: The date on which mirroring begins. The current date and time is used by default.

-

Sync Interval: Defaults to syncing every 24 hours. You can change that based on hours or days.

-

Skopeo timeout interval: Defaults to 300 seconds (5 minutes). The maximum timeout length is 43200 seconds (12 hours).

-

Robot User: Create a new robot account or choose an existing robot account to do the mirroring.

-

Username: The username for accessing the external registry holding the repository you are mirroring.

-

Password: The password associated with the Username. Note that the password cannot include characters that require an escape character (\).

-

-

In the Advanced Settings section, you can optionally configure SSL/TLS and proxy with the following options:

-

Verify TLS: Select this option if you want to require HTTPS and to verify certificates when communicating with the target remote registry.

-

Accept Unsigned Images: Selecting this option allows unsigned images to be mirrored.

-

HTTP Proxy: Identify the HTTP proxy server needed to access the remote site, if a proxy server is needed.

-

HTTPS Proxy: Identify the HTTPS proxy server needed to access the remote site, if a proxy server is needed.

-

No Proxy: List of locations that do not require proxy.

-

-

After filling out all information, click Enable Mirror.
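The Skopeo timeout field described above has a default of 300 seconds and a maximum of 43200 seconds. The following sketch shows one way to apply those two values before submitting a configuration. Rejecting out-of-range values with an error is an assumption made for illustration, because the behavior of Project Quay for invalid values is not described here. The function name is hypothetical.

```python
from typing import Optional

DEFAULT_SKOPEO_TIMEOUT = 300   # seconds (5 minutes), from the field description
MAX_SKOPEO_TIMEOUT = 43200     # seconds (12 hours), from the field description


def resolve_skopeo_timeout(requested: Optional[int] = None) -> int:
    """Return the timeout to use, falling back to the default when unset."""
    if requested is None:
        return DEFAULT_SKOPEO_TIMEOUT
    if requested <= 0:
        # Assumption: a timeout must be a positive number of seconds.
        raise ValueError("timeout must be a positive number of seconds")
    if requested > MAX_SKOPEO_TIMEOUT:
        raise ValueError(f"timeout exceeds the maximum of {MAX_SKOPEO_TIMEOUT} seconds")
    return requested


print(resolve_skopeo_timeout())      # falls back to the default
print(resolve_skopeo_timeout(1800))  # an explicit 30 minute timeout
```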

Starting a mirroring synchronization

To initiate a mirroring sync, you can navigate to the Mirroring tab of your repository and press the Sync Now button.

-

Navigate to the Mirroring tab of your repository or organization.

-

Press the Sync Now button.

-

Click the Logs tab to view available logs.

-

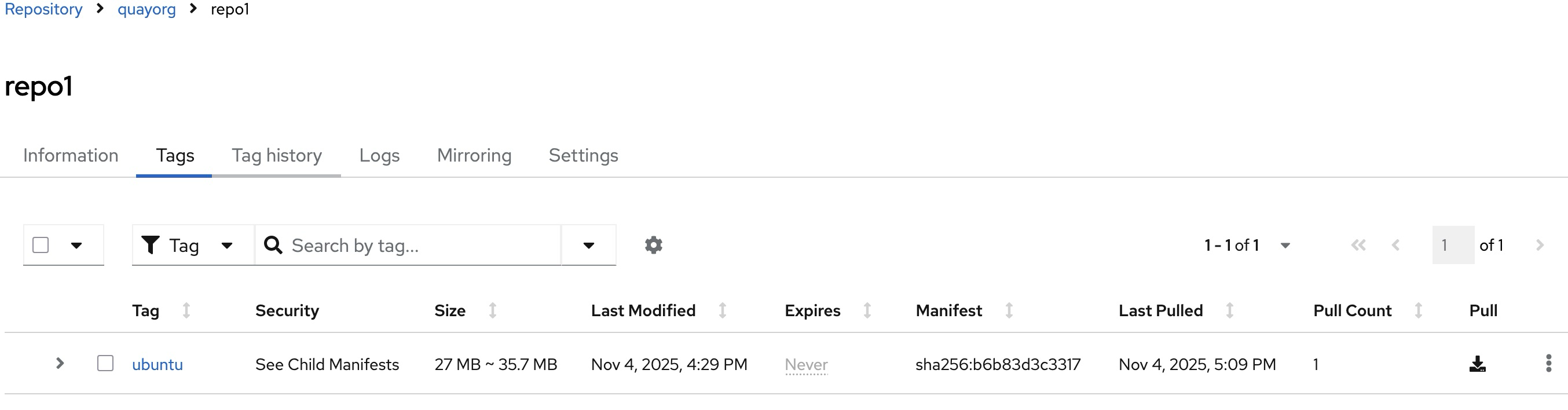

When mirroring is complete, the images appear in the Tags tab.

Event notifications for mirroring

There are three notification events for repository mirroring:

-

Repository Mirror Started

-

Repository Mirror Success

-

Repository Mirror Unsuccessful

The events can be configured inside of the Settings tab for each repository, and all existing notification methods such as email, Slack, Quay UI, and webhooks are supported.

Mirroring tag patterns

At least one tag must be entered. The following table references possible image tag patterns.

Pattern syntax

| Pattern | Description |
|---|---|
| * | Matches all characters |
| ? | Matches any single character |
| [seq] | Matches any character in seq |
| [!seq] | Matches any character not in seq |

Example tag patterns

| Example Pattern | Example Matches |
|---|---|
| v3* | v32, v3.1, v3.2, v3.2-4beta, v3.3 |
| v3.* | v3.1, v3.2, v3.2-4beta |
| v3.? | v3.1, v3.2, v3.3 |
| v3.[12] | v3.1, v3.2 |
| v3.[12]* | v3.1, v3.2, v3.2-4beta |
| v3.[!1]* | v3.2, v3.2-4beta, v3.3 |
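The pattern syntax above is the Unix shell-style wildcard syntax, which the Python standard library implements in the fnmatch module. The following sketch uses it to try out a comma-separated pattern list against a set of tags before entering the list in the Tags field. It assumes that the registry evaluates patterns with the same semantics, and the function name `matching_tags` is illustrative.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List


def matching_tags(tags: Iterable[str], patterns_csv: str) -> List[str]:
    """Return the tags that match at least one pattern in a comma-separated list."""
    patterns = [p.strip() for p in patterns_csv.split(",") if p.strip()]
    if not patterns:
        # At least one tag or tag pattern must be entered.
        raise ValueError("at least one tag pattern is required")
    # fnmatchcase compares case-sensitively on every platform.
    return [t for t in tags if any(fnmatchcase(t, p) for p in patterns)]


TAGS = ["v32", "v3.1", "v3.2", "v3.2-4beta", "v3.3"]
for pattern in ("v3*", "v3.*", "v3.?", "v3.[12]", "v3.[12]*", "v3.[!1]*"):
    print(pattern, "->", matching_tags(TAGS, pattern))
```

The matches listed in the table are examples rather than exhaustive sets. With the tags used in this sketch, the pattern v3.* also matches v3.3.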

Working with mirrored repositories

After you have created a mirrored repository, there are several ways that you can work with that repository.

Select your mirrored repository from the Repositories page to do any of the following:

-

Enable/disable the repository: Select the Mirroring button in the left column, then toggle the Enabled check box to enable or disable the repository temporarily.

-

Check mirror logs: To make sure the mirrored repository is working properly, you can check the mirror logs. To do that, select the Usage Logs button in the left column.

-

Sync mirror now: To immediately sync the images in your repository, select the Sync Now button.

-

Change credentials: To change the username and password, select DELETE from the Credentials line. Then select None and add the username and password needed to log into the external registry when prompted.

-

Cancel mirroring: To stop mirroring, which keeps the current images available but stops new ones from being synced, select the CANCEL button.

-

Set robot permissions: Project Quay robot accounts are named tokens that hold credentials for accessing external repositories. By assigning credentials to a robot, that robot can be used across multiple mirrored repositories that need to access the same external registry.

You can assign an existing robot to a repository by navigating to Organizations → Robot accounts. On this page, you can do the following:

-

Check which repositories are assigned to that robot.

-

Assign Read, Write, or Admin privileges to that robot from the PERMISSION field.

-

-

Change robot credentials: Robots can hold credentials such as Kubernetes secrets, Docker login information, and Podman login information. To change robot credentials, select the Options gear on the robot’s account line on the Robot Accounts window and choose View Credentials. Add the appropriate credentials for the external repository the robot needs to access.

-

Check and change general settings: Select the Settings button (gear icon) from the left column on the mirrored repository page. On the resulting page, you can change settings associated with the mirrored repository. In particular, you can change User and Robot Permissions, to specify exactly which users and robots can read from or write to the repository.

Repository mirroring recommendations

Best practices for repository mirroring include the following:

-

Repository mirroring pods can run on any node. This means that you can run mirroring on nodes where Project Quay is already running.

-

Repository mirroring is scheduled in the database and runs in batches. As a result, repository workers check each repository mirror configuration and read when the next sync is due. More mirror workers means that more repositories can be mirrored at the same time. For example, running 10 mirror workers means that a user can run 10 mirroring operations in parallel. If a user has only 2 workers and 10 mirror configurations, only 2 operations can be performed at a time.

-

The optimal number of mirroring pods depends on the following conditions:

-

The total number of repositories to be mirrored

-

The number of images and tags in the repositories and the frequency of changes

-

Parallel batching

For example, if a user is mirroring a repository that has 100 tags, the mirror will be completed by one worker. Consider how many repositories you want to mirror in parallel, and base the number of workers on that.

Multiple tags in the same repository cannot be mirrored in parallel.
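The sizing guidance above comes down to simple arithmetic. In the sketch below, the first function restates the rule that one worker handles one repository at a time. The second function is a deliberate simplification that assumes every sync takes about the same time, which is rarely true in practice, so treat its result as a rough planning figure only. Both function names are illustrative.

```python
import math


def parallel_mirrors(workers: int, configurations: int) -> int:
    """Number of mirror configurations that can be processed at the same time."""
    if workers < 0 or configurations < 0:
        raise ValueError("counts must not be negative")
    return min(workers, configurations)


def rounds_needed(workers: int, configurations: int) -> int:
    """Rough number of back-to-back rounds, assuming equally long syncs."""
    if workers <= 0:
        raise ValueError("at least one worker is required")
    return math.ceil(configurations / workers)


# The two cases from the text: 10 workers, and 2 workers with 10 configurations.
print(parallel_mirrors(10, 10), rounds_needed(10, 10))
print(parallel_mirrors(2, 10), rounds_needed(2, 10))
```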

-

IPv6 and dual-stack deployments

Your standalone Project Quay deployment can now be served in locations that only support IPv6, such as Telco and Edge environments. Support is also offered for dual-stack networking so your Project Quay deployment can listen on IPv4 and IPv6 simultaneously.

For a list of known limitations, see IPv6 and dual-stack limitations.

Enabling the IPv6 protocol family

Use the following procedure to enable IPv6 support on your standalone Project Quay deployment.

-

Your host and container software platform (Docker, Podman) must be configured to support IPv6.

-

In your deployment’s config.yaml file, add the FEATURE_LISTEN_IP_VERSION parameter and set it to IPv6, for example:

    ---
    FEATURE_GOOGLE_LOGIN: false
    FEATURE_INVITE_ONLY_USER_CREATION: false
    FEATURE_LISTEN_IP_VERSION: IPv6
    FEATURE_MAILING: false
    FEATURE_NONSUPERUSER_TEAM_SYNCING_SETUP: false
    ---

-

Start, or restart, your Project Quay deployment.

-

Check that your deployment is listening to IPv6 by entering the following command:

    $ curl <quay_endpoint>/health/instance
    {"data":{"services":{"auth":true,"database":true,"disk_space":true,"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},"status_code":200}

After enabling IPv6 in your deployment’s config.yaml, all Project Quay features can be used as normal, so long as your environment is configured to use IPv6 and is not hindered by the current limitations.
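The health response shown for the /health/instance endpoint can be checked automatically, for example in a deployment script. The sketch below parses a response body of that shape and reports whether every listed service is true. It assumes that an unhealthy instance returns the same structure with a false value or a status_code other than 200, which this document does not show. The function names are illustrative.

```python
import json
from typing import List


def failing_services(response_body: str) -> List[str]:
    """Return the names of services that are not reported as true."""
    services = json.loads(response_body).get("data", {}).get("services", {})
    return [name for name, healthy in services.items() if healthy is not True]


def instance_is_healthy(response_body: str) -> bool:
    """True when status_code is 200 and every listed service is true."""
    payload = json.loads(response_body)
    services = payload.get("data", {}).get("services", {})
    return (
        payload.get("status_code") == 200
        and bool(services)
        and not failing_services(response_body)
    )


# Response body copied from the health check output in this section.
SAMPLE = (
    '{"data":{"services":{"auth":true,"database":true,"disk_space":true,'
    '"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},'
    '"status_code":200}'
)
print(instance_is_healthy(SAMPLE))
```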

|

Warning

|

If your environment is configured to IPv4, but the |

Enabling the dual-stack protocol family

Use the following procedure to enable dual-stack (IPv4 and IPv6) support on your standalone Project Quay deployment.

-

Your host and container software platform (Docker, Podman) must be configured to support IPv6.

-

In your deployment’s config.yaml file, add the FEATURE_LISTEN_IP_VERSION parameter and set it to dual-stack, for example:

    ---
    FEATURE_GOOGLE_LOGIN: false
    FEATURE_INVITE_ONLY_USER_CREATION: false
    FEATURE_LISTEN_IP_VERSION: dual-stack
    FEATURE_MAILING: false
    FEATURE_NONSUPERUSER_TEAM_SYNCING_SETUP: false
    ---

-

Start, or restart, your Project Quay deployment.

-

Check that your deployment is listening to both channels by entering the following commands:

-

For IPv4, enter the following command:

    $ curl --ipv4 <quay_endpoint>
    {"data":{"services":{"auth":true,"database":true,"disk_space":true,"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},"status_code":200}

-

For IPv6, enter the following command:

    $ curl --ipv6 <quay_endpoint>
    {"data":{"services":{"auth":true,"database":true,"disk_space":true,"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},"status_code":200}

-

After enabling dual-stack in your deployment’s config.yaml, all Project Quay features can be used as normal, so long as your environment is configured for dual-stack.

IPv6 and dual-stack limitations

-

Currently, attempting to configure your Project Quay deployment with the common Azure Blob Storage configuration will not work on IPv6 single stack environments. Because the endpoint of Azure Blob Storage does not support IPv6, there is no workaround in place for this issue.

For more information, see PROJQUAY-4433.

-

Currently, attempting to configure your Project Quay deployment with Amazon S3 CloudFront will not work on IPv6 single stack environments. Because the endpoint of Amazon S3 CloudFront does not support IPv6, there is no workaround in place for this issue.

For more information, see PROJQUAY-4470.

LDAP Authentication Setup for Project Quay

Lightweight Directory Access Protocol (LDAP) is an open, vendor-neutral, industry standard application protocol for accessing and maintaining distributed directory information services over an Internet Protocol (IP) network. Project Quay supports using LDAP as an identity provider.

Considerations when enabling LDAP

Prior to enabling LDAP for your Project Quay deployment, you should consider the following.

Existing Project Quay deployments

Conflicts between usernames can arise when you enable LDAP for an existing Project Quay deployment that already has users configured. For example, suppose a user, alice, was manually created in Project Quay before LDAP was enabled. If the username alice also exists in the LDAP directory, Project Quay automatically creates a new user, alice-1, when alice logs in for the first time using LDAP. Project Quay then automatically maps the LDAP credentials to the alice account. This mapping might not be what you intend for your deployment. To avoid it, remove any potentially conflicting local account names from Project Quay before enabling LDAP.

Manual User Creation and LDAP authentication

When Project Quay is configured for LDAP, LDAP-authenticated users are automatically created in Project Quay’s database on first log in, if the configuration option FEATURE_USER_CREATION is set to True. If this option is set to False, the automatic user creation for LDAP users fails, and the user is not allowed to log in. In this scenario, the superuser needs to create the desired user account first. Conversely, if FEATURE_USER_CREATION is set to True, this also means that a user can still create an account from the Project Quay login screen, even if there is an equivalent user in LDAP.

Configuring LDAP for Project Quay

You can configure LDAP for Project Quay by updating your config.yaml file directly and restarting your deployment. Use the following procedure as a reference when configuring LDAP for Project Quay.

-

Update your config.yaml file directly to include the following relevant information:

# ...
AUTHENTICATION_TYPE: LDAP (1)
# ...
LDAP_ADMIN_DN: uid=<name>,ou=Users,o=<organization_id>,dc=<example_domain_component>,dc=com (2)
LDAP_ADMIN_PASSWD: ABC123 (3)
LDAP_ALLOW_INSECURE_FALLBACK: false (4)
LDAP_BASE_DN: (5)
  - dc=example
  - dc=com
LDAP_EMAIL_ATTR: mail (6)
LDAP_UID_ATTR: uid (7)
LDAP_URI: ldap://<example_url>.com (8)
LDAP_USER_FILTER: (memberof=cn=developers,ou=Users,dc=<domain_name>,dc=com) (9)
LDAP_USER_RDN: (10)
  - ou=people
LDAP_SECONDARY_USER_RDNS: (11)
  - ou=<example_organization_unit_one>
  - ou=<example_organization_unit_two>
  - ou=<example_organization_unit_three>
  - ou=<example_organization_unit_four>
# ...

-

Required. Must be set to LDAP.

-

Required. The admin DN for LDAP authentication.

-

Required. The admin password for LDAP authentication.

-

Required. Whether to allow SSL/TLS insecure fallback for LDAP authentication.

-

Required. The base DN for LDAP authentication.

-

Required. The email attribute for LDAP authentication.

-

Required. The UID attribute for LDAP authentication.

-

Required. The LDAP URI.

-

Required. The user filter for LDAP authentication.

-

Required. The user RDN for LDAP authentication.

-

Optional. Secondary User Relative DNs if there are multiple Organizational Units where user objects are located.

-

After you have added all required LDAP fields, save the changes and restart your Project Quay deployment.
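Before restarting, it can help to confirm that every field marked Required in the list above is present. The following Python sketch is one possible pre-flight check, not a Project Quay tool: the function name missing_ldap_fields is hypothetical, and the configuration is assumed to be already loaded into a dictionary (for example with a YAML parser).

```python
# Fields that the callout list above marks as Required.
REQUIRED_LDAP_FIELDS = (
    "LDAP_ADMIN_DN",
    "LDAP_ADMIN_PASSWD",
    "LDAP_ALLOW_INSECURE_FALLBACK",
    "LDAP_BASE_DN",
    "LDAP_EMAIL_ATTR",
    "LDAP_UID_ATTR",
    "LDAP_URI",
    "LDAP_USER_FILTER",
    "LDAP_USER_RDN",
)


def missing_ldap_fields(config):
    """Return a list of human-readable problems found in an LDAP configuration dict."""
    problems = []
    if config.get("AUTHENTICATION_TYPE") != "LDAP":
        problems.append("AUTHENTICATION_TYPE must be set to LDAP")
    for field in REQUIRED_LDAP_FIELDS:
        value = config.get(field)
        # A boolean false is a valid value; only absent or empty values are flagged.
        if value is None or value == "" or value == []:
            problems.append(field + " is missing or empty")
    return problems


# Placeholder values modeled on the example above; replace with your own.
example_config = {
    "AUTHENTICATION_TYPE": "LDAP",
    "LDAP_ADMIN_DN": "uid=admin,ou=Users,dc=example,dc=com",
    "LDAP_ADMIN_PASSWD": "ABC123",
    "LDAP_ALLOW_INSECURE_FALLBACK": False,
    "LDAP_BASE_DN": ["dc=example", "dc=com"],
    "LDAP_EMAIL_ATTR": "mail",
    "LDAP_UID_ATTR": "uid",
    "LDAP_URI": "ldap://ldap.example.com",
    "LDAP_USER_FILTER": "(memberof=cn=developers,ou=Users,dc=example,dc=com)",
    "LDAP_USER_RDN": ["ou=people"],
}

print(missing_ldap_fields(example_config))  # prints []
```

The check only verifies presence. It does not contact the LDAP server, so a configuration that passes can still fail with the errors described under "Common LDAP configuration issues".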

Enabling the LDAP_RESTRICTED_USER_FILTER configuration field

The LDAP_RESTRICTED_USER_FILTER configuration field is a subset of the LDAP_USER_FILTER configuration field. When configured, this option allows Project Quay administrators to configure LDAP users as restricted users when Project Quay uses LDAP as its authentication provider.

Use the following procedure to enable LDAP restricted users on your Project Quay deployment.

-

Your Project Quay deployment uses LDAP as its authentication provider.

-

You have configured the LDAP_USER_FILTER field in your config.yaml file.

-

In your deployment’s config.yaml file, add the LDAP_RESTRICTED_USER_FILTER parameter and specify the group of restricted users, for example, members:

# ...
AUTHENTICATION_TYPE: LDAP
# ...
FEATURE_RESTRICTED_USERS: true (1)
# ...
LDAP_ADMIN_DN: uid=<name>,ou=Users,o=<organization_id>,dc=<example_domain_component>,dc=com
LDAP_ADMIN_PASSWD: ABC123
LDAP_ALLOW_INSECURE_FALLBACK: false
LDAP_BASE_DN:
  - o=<organization_id>
  - dc=<example_domain_component>
  - dc=com
LDAP_EMAIL_ATTR: mail
LDAP_UID_ATTR: uid
LDAP_URI: ldap://<example_url>.com
LDAP_USER_FILTER: (memberof=cn=developers,ou=Users,o=<example_organization_unit>,dc=<example_domain_component>,dc=com)
LDAP_RESTRICTED_USER_FILTER: (<filterField>=<value>) (2)
LDAP_USER_RDN:
  - ou=<example_organization_unit>
  - o=<organization_id>
  - dc=<example_domain_component>
  - dc=com
# ...

-

Must be set to True when configuring an LDAP restricted user.

-

Configures specified users as restricted users.

-

Start, or restart, your Project Quay deployment.

After enabling the LDAP_RESTRICTED_USER_FILTER feature, the LDAP users that match the filter are restricted from reading and writing content, and from creating organizations.
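One way to read "subset" here is that a restricted user must match both LDAP_USER_FILTER and LDAP_RESTRICTED_USER_FILTER. The following Python sketch illustrates that reading by combining filters with the standard LDAP AND operator. It is an assumption about the semantics rather than Project Quay's implementation, and the helper name and group names are hypothetical.

```python
def and_filters(*filters):
    """Combine LDAP filter strings into a single AND filter of the form (&(a)(b))."""
    parts = [f.strip() for f in filters if f and f.strip()]
    if not parts:
        raise ValueError("at least one filter is required")
    for part in parts:
        # Each LDAP filter component must be wrapped in parentheses.
        if not (part.startswith("(") and part.endswith(")")):
            raise ValueError("filter must be wrapped in parentheses: %r" % part)
    if len(parts) == 1:
        return parts[0]
    return "(&" + "".join(parts) + ")"


# Hypothetical group names, used only for illustration.
user_filter = "(memberof=cn=developers,ou=Users,dc=example,dc=com)"
restricted_filter = "(memberof=cn=contractors,ou=Users,dc=example,dc=com)"

print(and_filters(user_filter, restricted_filter))
```

Pasting the combined filter into an LDAP search tool shows which directory entries would be treated as restricted under this reading, which is a useful check before restarting the deployment.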

Enabling the LDAP_SUPERUSER_FILTER configuration field

With the LDAP_SUPERUSER_FILTER field configured, Project Quay administrators can configure Lightweight Directory Access Protocol (LDAP) users as superusers if Project Quay uses LDAP as its authentication provider.

Use the following procedure to enable LDAP superusers on your Project Quay deployment.

-

Your Project Quay deployment uses LDAP as its authentication provider.

-

You have configured the LDAP_USER_FILTER field in your config.yaml file.

-

In your deployment’s config.yaml file, add the LDAP_SUPERUSER_FILTER parameter and add the group of users you want configured as superusers, for example, root:

# ...
AUTHENTICATION_TYPE: LDAP
# ...
LDAP_ADMIN_DN: uid=<name>,ou=Users,o=<organization_id>,dc=<example_domain_component>,dc=com
LDAP_ADMIN_PASSWD: ABC123
LDAP_ALLOW_INSECURE_FALLBACK: false
LDAP_BASE_DN:
  - o=<organization_id>
  - dc=<example_domain_component>
  - dc=com
LDAP_EMAIL_ATTR: mail
LDAP_UID_ATTR: uid
LDAP_URI: ldap://<example_url>.com
LDAP_USER_FILTER: (memberof=cn=developers,ou=Users,o=<example_organization_unit>,dc=<example_domain_component>,dc=com)
LDAP_SUPERUSER_FILTER: (<filterField>=<value>) (1)
LDAP_USER_RDN:
  - ou=<example_organization_unit>
  - o=<organization_id>
  - dc=<example_domain_component>
  - dc=com
# ...

-

Configures specified users as superusers.

-

Start, or restart, your Project Quay deployment.

After enabling the LDAP_SUPERUSER_FILTER feature, the LDAP users that match the filter have superuser privileges. The following options are available to superusers:

-

Manage users

-

Manage organizations

-

Manage service keys

-

View the change log

-

Query the usage logs

-

Create globally visible user messages

Common LDAP configuration issues

The following errors might be returned with an invalid configuration.

-

Invalid credentials. If you receive this error, the Administrator DN or Administrator DN password values are incorrect. Ensure that you are providing accurate Administrator DN and password values.

-

Verification of superuser %USERNAME% failed. This error is returned for the following reasons:

-

The username has not been found.

-

The user does not exist in the remote authentication system.

-

LDAP authorization is configured improperly.

-

Cannot find the current logged in user. The LDAP connection can be established successfully with the username and password provided in the Administrator DN fields, yet the currently logged-in user still cannot be found within the specified User Relative DN path using the UID Attribute or Mail Attribute fields. There are typically two reasons for this:

-

The current logged in user does not exist in the User Relative DN path.

-

The Administrator DN does not have rights to search or read the specified LDAP path.
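Both causes can be investigated by running a search as the Administrator DN outside of Project Quay. The following Python sketch builds an OpenLDAP ldapsearch command from the config.yaml values. It assumes that the search base is the User Relative DN followed by the Base DN, which is an assumption about how the fields combine, and the function names are hypothetical.

```python
import shlex


def escape_filter_value(value):
    """Escape characters that are special in LDAP search filters (RFC 4515)."""
    escaped = []
    for ch in value:
        if ch in "\\*()\x00":
            escaped.append("\\%02x" % ord(ch))
        else:
            escaped.append(ch)
    return "".join(escaped)


def ldapsearch_command(config, username):
    """Build an ldapsearch argument list that looks up one user as the admin DN."""
    # Assumption: the search base is the user RDN joined with the base DN.
    search_base = ",".join(list(config["LDAP_USER_RDN"]) + list(config["LDAP_BASE_DN"]))
    user_filter = "(%s=%s)" % (config["LDAP_UID_ATTR"], escape_filter_value(username))
    return [
        "ldapsearch",
        "-x",                          # simple authentication
        "-H", config["LDAP_URI"],      # LDAP server URI
        "-D", config["LDAP_ADMIN_DN"], # bind as the Administrator DN
        "-W",                          # prompt for the bind password
        "-b", search_base,             # where to search for the user
        user_filter,
    ]


# Placeholder values; replace with the values from your own config.yaml.
example_config = {
    "LDAP_URI": "ldap://ldap.example.com",
    "LDAP_ADMIN_DN": "uid=admin,ou=Users,dc=example,dc=com",
    "LDAP_BASE_DN": ["dc=example", "dc=com"],
    "LDAP_USER_RDN": ["ou=people"],
    "LDAP_UID_ATTR": "uid",
}

print(shlex.join(ldapsearch_command(example_config, "alice")))
```

If the resulting command binds successfully but returns no entries, the user is probably outside the User Relative DN path. If it fails with an access error, the Administrator DN likely lacks search or read rights on that path.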