To upgrade Project Quay, follow the procedure that matches your installation type. You can use the Operator with the Operator Lifecycle Manager (OLM) for Operator-based installations, or the standalone procedure for proof of concept or highly available setups.

Upgrading the Project Quay Operator Overview

The Project Quay Operator uses synchronized versioning: each Operator version deploys a single, matching version of Project Quay and its components. You can use this scheme to plan upgrades and keep components compatible.

Note

There is no field on the …

Operator Lifecycle Manager

Operator Lifecycle Manager (OLM) installs and upgrades the Project Quay Operator. You can use automatic or manual approval in the Subscription to control when new Operator versions are applied.

Warning

When the Project Quay Operator is installed by Operator Lifecycle Manager, it might be configured to support automatic or manual upgrades. This option is shown on the OperatorHub page for the Project Quay Operator during installation. It can also be found in the Project Quay Operator …

Upgrading the Project Quay Operator

To upgrade the Project Quay Operator, use the standard OpenShift Container Platform process for installed Operators and follow N-1 minor version paths.

In general, Project Quay supports upgrades from a prior (N-1) minor version only. For example, upgrading directly from Project Quay 3.9 to the latest version of 3.18 is not supported. Instead, users would have to upgrade as follows:

- 3.9.z → 3.10.z
- 3.10.z → 3.11.z
- 3.11.z → 3.14.z
- 3.14.z → 3.16.z
- 3.16.z → 3.17.z

This is required to ensure that any necessary database migrations are done correctly and in the right order during the upgrade.

In some cases, Project Quay supports direct, single-step upgrades from prior (N-2, N-3) minor versions. This simplifies the upgrade procedure for customers on older releases. The following upgrade paths are supported for Project Quay {productmin}:

- 3.15.z → {productmin}
- 3.16.z → {productmin}
- 3.17.z → {productmin}
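As a rough illustration, the direct-upgrade rule above can be written as a small shell check. This is a sketch rather than part of any Project Quay tooling: the function name `is_direct_upgrade_supported` is hypothetical, and the target is assumed to be the 3.18 stream, which is what `{productmin}` appears to stand for in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the given version can upgrade directly
# to 3.18 (assumed target), based on the supported paths listed above.
is_direct_upgrade_supported() {
  local version="$1"
  local minor
  # Reduce a version such as "3.16.4" to its minor stream "3.16".
  minor="$(printf '%s' "$version" | cut -d. -f1,2)"
  case "$minor" in
    3.15|3.16|3.17) return 0 ;;
    *) return 1 ;;
  esac
}
```

For example, `is_direct_upgrade_supported 3.16.4 && echo "direct upgrade supported"` prints the message, while a 3.9.z deployment would first have to step through intermediate versions.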

Upgrading Project Quay to version {productmin}

To upgrade Project Quay to the next version, change the Operator update channel in the OpenShift Container Platform Web Console and wait for the upgrade pods to complete. You can then verify the database images and access your registry.

- In the OpenShift Container Platform Web Console, navigate to Operators → Installed Operators.
- Click the Project Quay Operator.
- Navigate to the Subscription tab.
- Under Subscription details, click Update channel.
- Select stable-3.18 → Save.
- Check the progress of the new installation under Upgrade status. Wait until the upgrade status changes to 1 installed before proceeding.
- In your OpenShift Container Platform cluster, navigate to Workloads → Pods. Existing pods should be terminated, or in the process of being terminated.
- Wait for the following pods, which upgrade the database and run the Alembic migration of existing data, to spin up: `clair-postgres-upgrade`, `quay-postgres-upgrade`, and `quay-app-upgrade`.
- After the `clair-postgres-upgrade`, `quay-postgres-upgrade`, and `quay-app-upgrade` pods are marked as Completed, the remaining pods for your Project Quay deployment spin up. This takes approximately ten minutes.
- Verify that the `quay-database` pod uses the `postgresql-13` image and that the `clair-postgres` pod now uses the `postgresql-15` image.
- After the `quay-app` pod is marked as Running, you can reach your Project Quay registry.
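The console steps above can also be approximated from the command line. The following is a minimal sketch, assuming the Operator's Subscription is named `quay-operator` and lives in a namespace called `quay-enterprise`. Both defaults, and the helper function names, are placeholders to adapt to your cluster.

```shell
#!/usr/bin/env bash
# Assumed defaults; override them to match your installation.
QUAY_NAMESPACE="${QUAY_NAMESPACE:-quay-enterprise}"
QUAY_SUBSCRIPTION="${QUAY_SUBSCRIPTION:-quay-operator}"

# Build the JSON merge patch that selects an update channel.
channel_patch() {
  printf '{"spec":{"channel":"%s"}}' "$1"
}

# Switch the Subscription to the given channel
# (CLI counterpart of "Update channel" in the web console).
set_update_channel() {
  oc patch subscriptions.operators.coreos.com "$QUAY_SUBSCRIPTION" \
    -n "$QUAY_NAMESPACE" --type merge -p "$(channel_patch "$1")"
}

# Print each pod name with the images it runs, to check the
# quay-database and clair-postgres images after the upgrade.
list_pod_images() {
  oc get pods -n "$QUAY_NAMESPACE" \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.containers[*].image}{"\n"}{end}'
}
```

A typical sequence would be `set_update_channel stable-3.18`, then `oc get pods -n "$QUAY_NAMESPACE" -w` to watch the upgrade pods complete, and finally `list_pod_images` to inspect the database images.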

Upgrading to the next minor release version

Z-stream upgrades for Project Quay, for example 3.13.1 → 3.13.2, use your existing channel and approval strategy. With Automatic approval, the Operator applies new z-stream updates with little or no downtime; with Manual approval, you approve each update first.

Manually approving a pending Operator upgrade

To approve a pending Project Quay Operator upgrade when using Manual approval, open the Subscription tab, review the install plan and resources, and click Approve. You can then monitor the upgrade progress on the Installed Operators page.

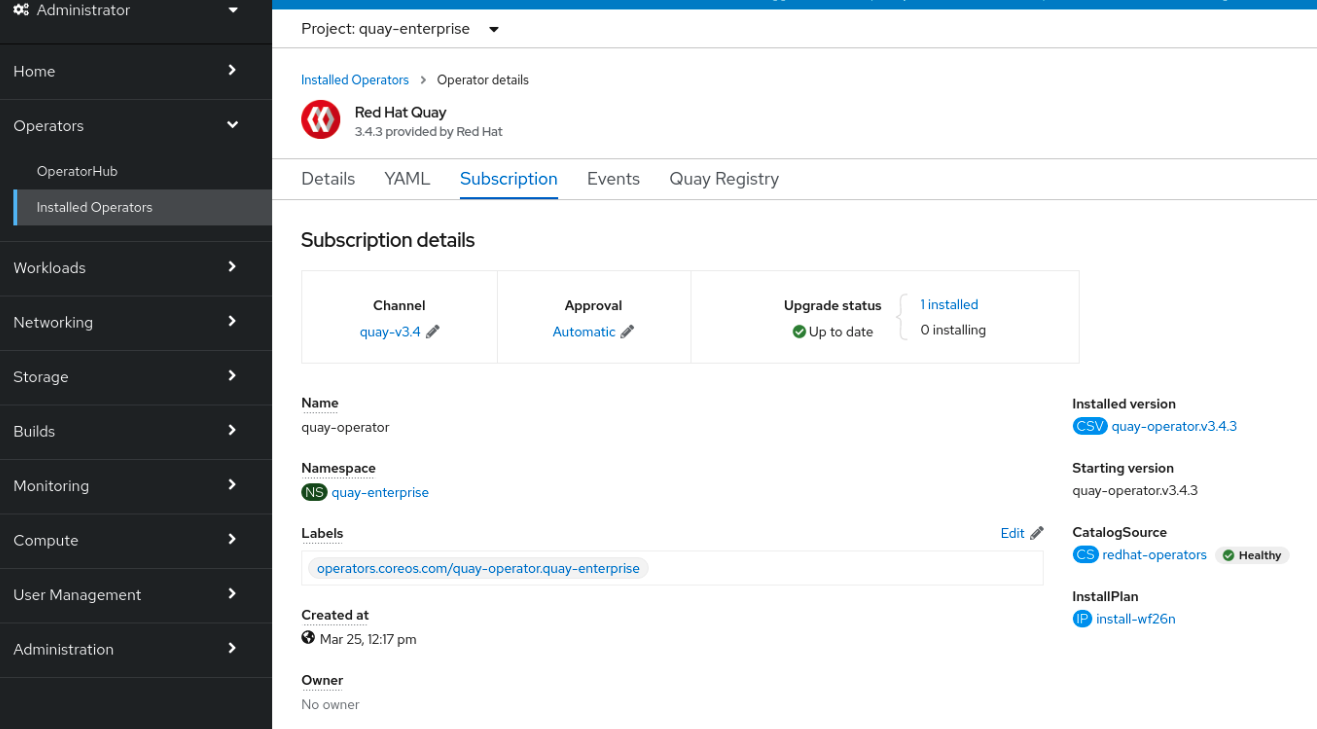

The following image shows the Subscription tab in the UI, including the update Channel, the Approval strategy, the Upgrade status and the InstallPlan:

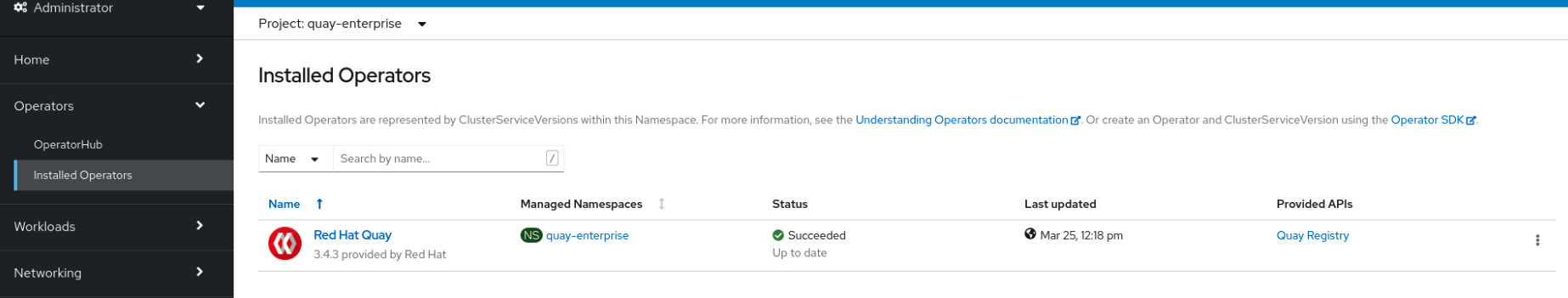

The list of Installed Operators provides a high-level summary of the current Quay installation:
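Manual approval can also be handled from the command line by setting `spec.approved` on the pending InstallPlan. The sketch below assumes the Operator is installed in a namespace called `quay-enterprise`. The namespace default and the function names are illustrative, not taken from this guide.

```shell
#!/usr/bin/env bash
# Assumed namespace of the Operator installation; adjust as needed.
QUAY_NAMESPACE="${QUAY_NAMESPACE:-quay-enterprise}"

# List InstallPlans with their approval state and phase.
list_install_plans() {
  oc get installplan -n "$QUAY_NAMESPACE" \
    -o custom-columns=NAME:.metadata.name,APPROVED:.spec.approved,PHASE:.status.phase
}

# Approve one InstallPlan by name (CLI counterpart of clicking Approve).
approve_install_plan() {
  oc patch installplan "$1" -n "$QUAY_NAMESPACE" \
    --type merge -p '{"spec":{"approved":true}}'
}
```

Run `list_install_plans` first, review the plan that is not yet approved, then pass its name to `approve_install_plan`.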

Upgrading a QuayRegistry resource

The Project Quay Operator reconciles QuayRegistry resources and upgrades them when the Operator version differs from the current version. When an upgrade is supported, the Operator applies it and updates status; when not, it returns an error and leaves the QuayRegistry unchanged.

The following logic is used:

- If `status.currentVersion` is unset, reconcile as normal.
- If `status.currentVersion` equals the Operator version, reconcile as normal.
- If `status.currentVersion` does not equal the Operator version, check if it can be upgraded. If it can, perform upgrade tasks and set `status.currentVersion` to the Operator’s version once complete. If it cannot be upgraded, return an error and leave the `QuayRegistry` and its deployed Kubernetes objects alone.
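The three cases above can be sketched as a small decision function. This is only an illustration of the described logic, not the Operator's actual implementation. The function name `reconcile_action` and the `yes`/`no` flag for whether the upgrade path is supported are assumptions made for the example.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the decision logic described above.
# $1: status.currentVersion (may be empty)
# $2: Operator version
# $3: "yes" if the upgrade path is supported (assumed to be decided elsewhere)
reconcile_action() {
  local current="$1" operator="$2" can_upgrade="$3"
  if [ -z "$current" ] || [ "$current" = "$operator" ]; then
    # Unset or matching version: reconcile as normal.
    echo "reconcile"
  elif [ "$can_upgrade" = "yes" ]; then
    # Supported path: run upgrade tasks, then record the Operator version.
    echo "upgrade"
  else
    # Unsupported path: report an error and leave the QuayRegistry alone.
    echo "error"
  fi
}
```

For instance, `reconcile_action 3.17.2 3.18.0 yes` prints `upgrade`, while an empty current version always results in `reconcile`.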

Upgrading a QuayEcosystem

To migrate an existing QuayEcosystem to a QuayRegistry managed by the Project Quay Operator, add the migration label to the QuayEcosystem custom resource and wait for the new QuayRegistry to start. You can then verify the migration and delete the old QuayEcosystem.

- Add `"quay-operator/migrate": "true"` to the `metadata.labels` of the `QuayEcosystem`:

      $ oc edit quayecosystem <quayecosystem_name>

      metadata:
        labels:
          quay-operator/migrate: "true"

- Wait for a `QuayRegistry` CR to be created with the same `metadata.name` as your `QuayEcosystem`. The `QuayEcosystem` CR is marked with the label `"quay-operator/migration-complete": "true"`.
- After the `status.registryEndpoint` of the new `QuayRegistry` is set, access Project Quay and confirm that all data and settings were migrated successfully.
- If everything works correctly, you can delete the `QuayEcosystem`. Kubernetes garbage collection cleans up all old resources.
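If you prefer not to edit the resource interactively, the label can be applied with `oc label`, and the endpoint can be read with a JSONPath query. The following sketch assumes you pass the resource name and namespace yourself. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Label the QuayEcosystem to start the migration
# (same effect as adding the label under metadata.labels).
# $1: QuayEcosystem name, $2: namespace
start_migration() {
  oc label quayecosystem "$1" -n "$2" quay-operator/migrate=true
}

# Print status.registryEndpoint of the new QuayRegistry.
# The output stays empty until the Operator has set the field.
# $1: QuayRegistry name (same as the QuayEcosystem name), $2: namespace
registry_endpoint() {
  oc get quayregistry "$1" -n "$2" -o jsonpath='{.status.registryEndpoint}'
}
```

A non-empty result from `registry_endpoint` indicates that the new registry is reachable and ready for you to verify the migrated data.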

Reverting QuayEcosystem Upgrade

To revert to the QuayEcosystem when an upgrade to QuayRegistry fails or causes issues, delete the QuayRegistry and restore the Route to the original Service. You can then use the Project Quay deployment managed by the QuayEcosystem.

Note

If your …

- Delete the `QuayRegistry` using either the UI or `kubectl`:

      $ kubectl delete -n <namespace> quayregistry <quayecosystem-name>

- If external access was provided using a `Route`, change the `Route` to point back to the original `Service` using the UI or `kubectl`.

Supported QuayEcosystem Configurations for Upgrades

The Project Quay Operator reports errors in its logs and in status.conditions if migrating a QuayEcosystem component fails or is unsupported.

All unmanaged components should migrate successfully because no Kubernetes resources need to be adopted and all the necessary values are already provided in Project Quay’s config.yaml file.

- Database
  - Ephemeral database not supported (`volumeSize` field must be set).
- Redis
  - Nothing special needed.
- External Access
  - Only passthrough `Route` access is supported for automatic migration. Manual migration required for other methods.
    - `LoadBalancer` without custom hostname: After the `QuayEcosystem` is marked with label `"quay-operator/migration-complete": "true"`, delete the `metadata.ownerReferences` field from the existing `Service` before deleting the `QuayEcosystem` to prevent Kubernetes from garbage collecting the `Service` and removing the load balancer. A new `Service` will be created with `metadata.name` format `<QuayEcosystem-name>-quay-app`. Edit the `spec.selector` of the existing `Service` to match the `spec.selector` of the new `Service` so traffic to the old load balancer endpoint will now be directed to the new pods. You are now responsible for the old `Service`; the Quay Operator will not manage it.
    - `LoadBalancer`/`NodePort`/`Ingress` with custom hostname: A new `Service` of type `LoadBalancer` will be created with `metadata.name` format `<QuayEcosystem-name>-quay-app`. Change your DNS settings to point to the `status.loadBalancer` endpoint provided by the new `Service`.
- Clair
  - Nothing special needed.
- Object Storage
  - `QuayEcosystem` did not have a managed object storage component, so object storage will always be marked as unmanaged. Local storage is not supported.
- Repository Mirroring
  - Nothing special needed.

Standalone Project Quay upgrade

To upgrade a standalone Project Quay and Clair deployment, follow the procedure for your current version in sequential order. You stop the containers, back up the database and storage, then start the new Clair and Project Quay images.

In general, Project Quay supports single-step upgrades from prior (N-2, N-3) minor versions. This helps simplify the upgrade procedure for customers on older releases. The following upgrade paths are supported for Project Quay {productmin}:

- 3.15.z → {productmin}
- 3.16.z → {productmin}
- 3.17.z → {productmin}

Upgrading to 3.18 from releases older than those listed above is unsupported. This helps ensure that any necessary database migrations are done correctly and in the right order during the upgrade.

For users wanting to upgrade the Project Quay Operator, see Upgrading the Project Quay Operator Overview.

This document describes the steps needed to perform each individual upgrade. Determine your current version and then follow the steps in sequential order, starting with your current version and working up to your desired target version.

See the Project Quay Release Notes for information on features for individual releases.

The general procedure for a manual upgrade consists of the following steps:

- Stop the `Quay` and `Clair` containers.
- Back up the database and image storage (optional but recommended).
- Start Clair using the new version of the image.
- Wait until Clair is ready to accept connections before starting the new version of Project Quay.

Accessing images

To access Project Quay and Clair images for standalone upgrades, pull from registry.redhat.io or registry.access.redhat.com and configure authentication as described in Red Hat Container Registry Authentication.

Project Quay images from version 3.4.0 and later are available from registry.redhat.io and registry.access.redhat.com, with authentication set up as described in Red Hat Container Registry Authentication.

Upgrade to v3.18.0 from 3.17.z

- Quay: quay.io/projectquay/quay:v3.18.0
- Clair: quay.io/projectquay/clair:4.9
- PostgreSQL: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33
- Redis: centos/redis-32-centos7@sha256:06dbb609484330ec6be6090109f1fa16e936afcf975d1cbc5fff3e6c7cae7542
- Clair-PostgreSQL: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33

Upgrade to v3.18.0 from 3.16.z

- Quay: quay.io/projectquay/quay:v3.18.0
- Clair: quay.io/projectquay/clair:4.9
- PostgreSQL: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33
- Redis: centos/redis-32-centos7@sha256:06dbb609484330ec6be6090109f1fa16e936afcf975d1cbc5fff3e6c7cae7542
- Clair-PostgreSQL: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33

Upgrade to v3.18.0 from 3.15.z

- Quay: quay.io/projectquay/quay:v3.18.0
- Clair: quay.io/projectquay/clair:4.9
- PostgreSQL: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33
- Redis: centos/redis-32-centos7@sha256:06dbb609484330ec6be6090109f1fa16e936afcf975d1cbc5fff3e6c7cae7542
- Clair-PostgreSQL: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33

Upgrading a geo-replication deployment of standalone Project Quay

To upgrade a geo-replication deployment of standalone Project Quay, stop operations on all instances, back up the deployment, then follow the procedure to upgrade each system. Expect intermittent downtime when upgrading to the next y-stream release.

Note

This procedure assumes that you are running Project Quay services on three (or more) systems. For more information, see Preparing for Project Quay high availability.

- You have logged into `registry.redhat.io`.

- Obtain a list of all Project Quay instances on each system running a Project Quay instance.
  - Enter the following command on System A to reveal the Project Quay instances:

        $ sudo podman ps

    Example output

        CONTAINER ID  IMAGE                                              COMMAND   CREATED        STATUS            PORTS                                        NAMES
        ec16ece208c0  registry.redhat.io/quay/quay-rhel8:v{producty-n1}  registry  6 minutes ago  Up 6 minutes ago  0.0.0.0:80->8080/tcp, 0.0.0.0:443->8443/tcp  quay01

  - Enter the following command on System B to reveal the Project Quay instances:

        $ sudo podman ps

    Example output

        CONTAINER ID  IMAGE                                              COMMAND   CREATED        STATUS            PORTS                                        NAMES
        7ae0c9a8b37d  registry.redhat.io/quay/quay-rhel8:v{producty-n1}  registry  5 minutes ago  Up 2 seconds ago  0.0.0.0:82->8080/tcp, 0.0.0.0:445->8443/tcp  quay02

  - Enter the following command on System C to reveal the Project Quay instances:

        $ sudo podman ps

    Example output

        CONTAINER ID  IMAGE                                              COMMAND   CREATED        STATUS            PORTS                                        NAMES
        e75c4aebfee9  registry.redhat.io/quay/quay-rhel8:v{producty-n1}  registry  4 seconds ago  Up 4 seconds ago  0.0.0.0:84->8080/tcp, 0.0.0.0:447->8443/tcp  quay03

- Temporarily shut down all Project Quay instances on each system.
  - Enter the following command on System A to shut down the Project Quay instance:

        $ sudo podman stop ec16ece208c0

  - Enter the following command on System B to shut down the Project Quay instance:

        $ sudo podman stop 7ae0c9a8b37d

  - Enter the following command on System C to shut down the Project Quay instance:

        $ sudo podman stop e75c4aebfee9

- Obtain the latest Project Quay version, for example, Project Quay 3.18, on each system.
  - Enter the following command on System A to obtain the latest Project Quay version:

        $ sudo podman pull registry.redhat.io/quay/quay-rhel8:{productminv}

  - Enter the following command on System B to obtain the latest Project Quay version:

        $ sudo podman pull registry.redhat.io/quay/quay-rhel8:v{producty}

  - Enter the following command on System C to obtain the latest Project Quay version:

        $ sudo podman pull registry.redhat.io/quay/quay-rhel8:{productminv}

- On System A of your highly available Project Quay deployment, run the new image version, for example, Project Quay 3.18:

      $ sudo podman run --restart=always -p 443:8443 -p 80:8080 \
         --sysctl net.core.somaxconn=4096 \
         --name=quay01 \
         -v /mnt/quay/config:/conf/stack:Z \
         -v /mnt/quay/storage:/datastorage:Z \
         -d registry.redhat.io/quay/quay-rhel8:{productminv}

- Wait for the new Project Quay container to become fully operational on System A. You can check the status of the container by entering the following command:

      $ sudo podman ps

  Example output

      CONTAINER ID  IMAGE                                           COMMAND   CREATED        STATUS            PORTS                                        NAMES
      70b9f38c3fb4  registry.redhat.io/quay/quay-rhel8:v{producty}  registry  2 seconds ago  Up 2 seconds ago  0.0.0.0:80->8080/tcp, 0.0.0.0:443->8443/tcp  quay01

- Optional: Ensure that Project Quay is fully operational by navigating to the Project Quay UI.
- After ensuring that Project Quay on System A is fully operational, run the new image versions on System B and on System C.
  - On System B of your highly available Project Quay deployment, run the new image version, for example, Project Quay 3.18:

        $ sudo podman run --restart=always -p 443:8443 -p 80:8080 \
           --sysctl net.core.somaxconn=4096 \
           --name=quay02 \
           -v /mnt/quay/config:/conf/stack:Z \
           -v /mnt/quay/storage:/datastorage:Z \
           -d registry.redhat.io/quay/quay-rhel8:{productminv}

  - On System C of your highly available Project Quay deployment, run the new image version, for example, Project Quay 3.18:

        $ sudo podman run --restart=always -p 443:8443 -p 80:8080 \
           --sysctl net.core.somaxconn=4096 \
           --name=quay03 \
           -v /mnt/quay/config:/conf/stack:Z \
           -v /mnt/quay/storage:/datastorage:Z \
           -d registry.redhat.io/quay/quay-rhel8:{productminv}

- You can check the status of the containers on System B and on System C by entering the following command:

      $ sudo podman ps
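The "wait until the container is operational" steps in the procedure above can be partly automated by polling `podman ps`. The sketch below is one possible approach. The function name `wait_for_container` and its default retry values are assumptions for the example. Note that an `Up` status only shows that the container is running, so checking the Project Quay UI is still worthwhile.

```shell
#!/usr/bin/env bash
# Poll until the named container reports an "Up" status, or give up.
# $1: container name (for example quay01)
# $2: maximum number of attempts (default 30, assumed)
# $3: delay between attempts in seconds (default 5, assumed)
wait_for_container() {
  local name="$1" attempts="${2:-30}" delay="${3:-5}" status
  while [ "$attempts" -gt 0 ]; do
    # Ask podman for the status column of the matching container only.
    status="$(sudo podman ps --filter "name=$name" --format '{{.Status}}')"
    case "$status" in
      Up*) return 0 ;;
    esac
    attempts=$((attempts - 1))
    sleep "$delay"
  done
  return 1
}
```

For example, `wait_for_container quay01 && echo "quay01 is up"` could be run on System A before starting the new containers on System B and System C.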

Upgrading a geo-replication deployment of Project Quay on OpenShift Container Platform

To upgrade your geo-replicated Project Quay on OpenShift Container Platform deployment, you must stop operations, scale down secondary systems, upgrade the primary system, then upgrade secondary systems. This ensures a safe upgrade process with minimal downtime across your geo-replicated registry.

The following procedure assumes that you are running the Project Quay registry on three or more systems. For this procedure, three systems named System A, System B, and System C are used. System A serves as the primary system in which the Project Quay Operator is deployed.

- On System B and System C, scale down your Project Quay registry. This is done by disabling auto scaling and overriding the replica count for Project Quay, mirror workers, and Clair if it is managed. Use the following `quayregistry.yaml` file as a reference:

      apiVersion: quay.redhat.com/v1
      kind: QuayRegistry
      metadata:
        name: registry
        namespace: ns
      spec:
        components:
          …
          - kind: horizontalpodautoscaler
            managed: false
          - kind: quay
            managed: true
            overrides:
              replicas: 0
          - kind: clair
            managed: true
            overrides:
              replicas: 0
          - kind: mirror
            managed: true
            overrides:
              replicas: 0
          …

  where:

  - `managed: false`: Disables auto scaling of `Quay`, `Clair` and `Mirroring` workers.
  - `overrides`: Sets the replica count to 0 for components accessing the database and object storage.

  Note

  You must keep the Project Quay registry running on System A. Do not update the `quayregistry.yaml` file on System A.

- Wait for the `registry-quay-app`, `registry-quay-mirror`, and `registry-clair-app` pods to disappear. Enter the following command to check their status:

      $ oc get pods -n <quay-namespace>

  Example output

      quay-operator.v3.7.1-6f9d859bd-p5ftc          1/1  Running    0            12m
      quayregistry-clair-postgres-7487f5bd86-xnxpr  1/1  Running    1 (12m ago)  12m
      quayregistry-quay-app-upgrade-xq2v6           0/1  Completed  0            12m
      quayregistry-quay-redis-84f888776f-hhgms      1/1  Running    0            12m

- On System A, initiate a Project Quay upgrade to the latest y-stream version. This is a manual process. For more information about upgrading installed Operators, see Upgrading installed Operators. For more information about Project Quay upgrade paths, see Upgrading the Project Quay Operator.
- After the new Project Quay registry is installed, the necessary upgrades on the cluster are automatically completed. Afterwards, new Project Quay pods are started with the latest y-stream version. Additionally, new `Quay` pods are scheduled and started.
- Confirm that the update has worked properly by navigating to the Project Quay UI:
  - In the OpenShift console, navigate to Operators → Installed Operators, and click the Registry Endpoint link.

    Important

    Do not execute the following step until the Project Quay UI is available. Do not upgrade the Project Quay registry on System B and on System C until the UI is available on System A.

- After confirming that the update has worked properly on System A, initiate the Project Quay upgrade on System B and on System C. The Operator upgrade results in an upgraded Project Quay installation, and the pods are restarted.

  Note

  Because the database schema is correct for the new y-stream installation, the new pods on System B and on System C should quickly start.

- After updating, revert the changes made in step 1 of this procedure by removing `overrides` for the components. For example:

      apiVersion: quay.redhat.com/v1
      kind: QuayRegistry
      metadata:
        name: registry
        namespace: ns
      spec:
        components:
          …
          - kind: horizontalpodautoscaler
            managed: true
          - kind: quay
            managed: true
          - kind: clair
            managed: true
          - kind: mirror
            managed: true
          …

  where:

  - `kind: horizontalpodautoscaler`: Set this resource to `True` if the `horizontalpodautoscaler` resource was set to `True` before the upgrade procedure, or if you want Project Quay to scale in case of a resource shortage.
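When waiting for the scaled-down pods on System B and System C to disappear, it can help to count the remaining pods instead of reading the listing by eye. The following sketch assumes the pod names contain `-quay-app-`, `-quay-mirror-`, or `-clair-app-`, as in the names mentioned in the procedure, and it skips completed upgrade pods. The function name `count_scaled_pods` is hypothetical.

```shell
#!/usr/bin/env bash
# Count pods that belong to the Quay app, mirror, or Clair app deployments.
# Pods whose names contain "upgrade" (completed job pods) are ignored.
# $1: namespace of the QuayRegistry
count_scaled_pods() {
  oc get pods -n "$1" --no-headers 2>/dev/null \
    | awk '$1 ~ /-(quay-app|quay-mirror|clair-app)-/ && $1 !~ /upgrade/ { n++ } END { print n + 0 }'
}
```

A simple wait loop could then be `while [ "$(count_scaled_pods <quay-namespace>)" -gt 0 ]; do sleep 5; done`, run against each secondary system before upgrading System A.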

Upgrading the Quay Bridge Operator

To receive the latest Project Quay upgrades and features, you can upgrade the Quay Bridge Operator by changing the update channel in the operator subscription.

- You have installed Project Quay.
- You are logged in to OpenShift Container Platform as a cluster administrator.

- On the OpenShift Container Platform web console, click Operators → Installed Operators → Quay Bridge Operator.
- Click the Subscription tab.
- Under Update channel, click stable-3.15 → Save.

Downgrading Project Quay

Project Quay only supports rolling back, or downgrading, to previous z-stream versions, for example, 3.12.3 → 3.12.2. Rolling back to previous y-stream versions (3.18 → {producty-n1}) is not supported. This is because Project Quay updates might contain database schema upgrades that are applied when upgrading to a new version of Project Quay. Database schema upgrades are not considered backwards compatible.

Important

Downgrading to previous z-streams is neither recommended nor supported by either Operator-based deployments or virtual machine-based deployments. Downgrading should only be done in extreme circumstances. The decision to roll back your Project Quay deployment must be made in conjunction with the Project Quay support and development teams. For more information, contact Project Quay support.